Learn About Designing Data Lakes in Oracle Cloud

Oracle Cloud Infrastructure (OCI) offers a robust and comprehensive portfolio of infrastructure and cloud platform data and AI services to access, store, and process a wide range of data types from any source. OCI enables you to implement end-to-end, enterprise-scale data and AI architectures in the cloud. This solution playbook gives you an overview of the key services that help you build and work with data lakes on OCI. You also learn about other available services and can design your data lake solutions based on some of our vetted patterns and expert guidance.

Architecture

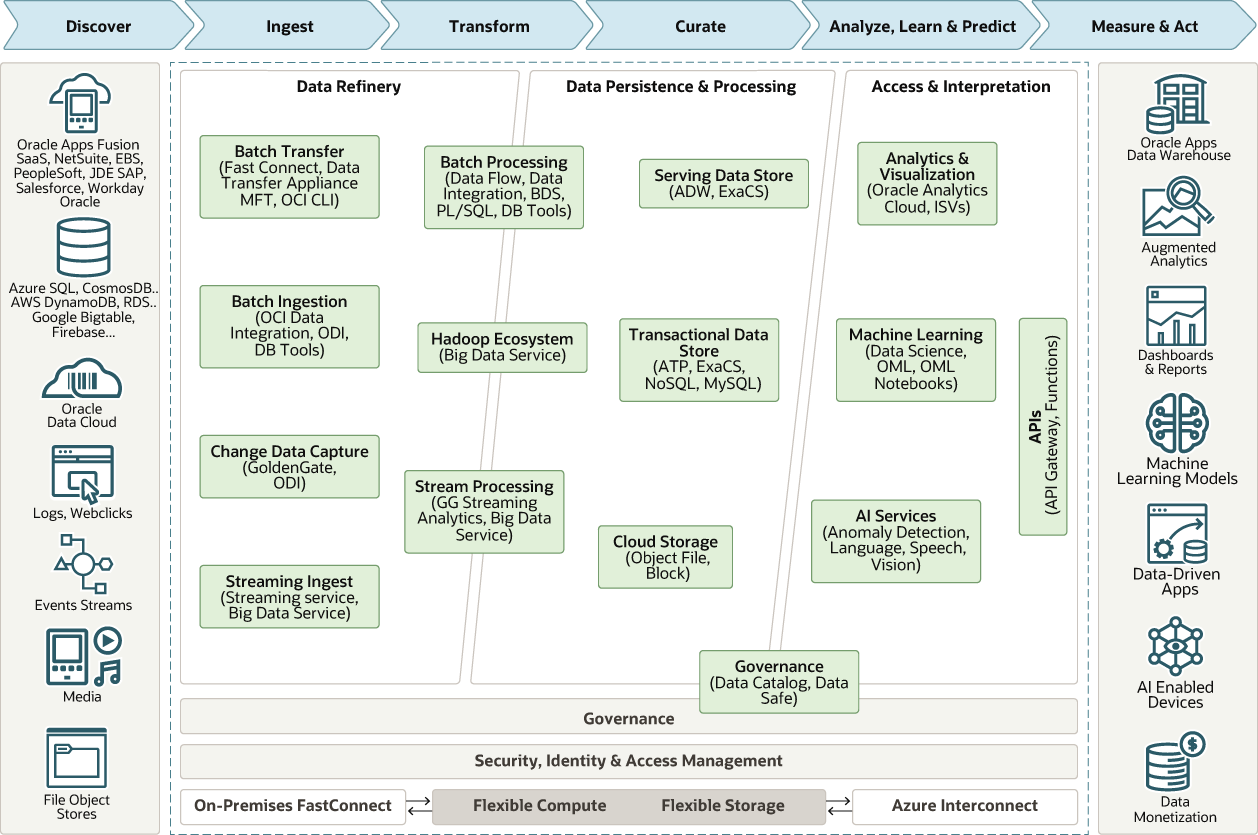

This architecture combines the abilities of a data lake and a data warehouse to process different types of data from a broad range of enterprise data resources. Use this architecture to design end-to-end data lake architectures in OCI.

This diagram shows a high-level architecture of Oracle data and AI services.

In this architecture, the data moves through these stages:

- Data Refinery

Ingests and refines the data for use in each of the data layers in the architecture.

- Data Persistence & Processing (Curated Information Layer)

Facilitates access and navigation of the data to show the current business view. For relational technologies, data may be logically or physically structured in simple relational, longitudinal, dimensional, or OLAP forms. For non-relational data, this layer contains one or more pools of data, either output from an analytical process or data optimized for a specific analytical task.

- Access & Interpretation

Abstracts the logical business view of the data for the consumers. This abstraction facilitates Agile development, migration to the target architecture, and the provision of a single reporting layer from multiple federated sources.
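
The three stages above can be sketched as a small pipeline. This is a minimal illustration in plain Python, not OCI code: the sample data and the function names `refine`, `curate`, and `business_view` are hypothetical stand-ins for the Data Refinery, the Curated Information Layer, and the Access & Interpretation layer.

```python
import csv
import io
from collections import defaultdict

# Hypothetical raw input standing in for data from an enterprise source.
RAW_CSV = """order_id,region,amount
1,EMEA,120.50
2,APAC,not_available
3,EMEA,79.50
4,AMER,200.00
"""


def refine(raw_text):
    """Data Refinery: ingest raw rows and drop those that fail validation."""
    refined = []
    for row in csv.DictReader(io.StringIO(raw_text)):
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # skip rows whose amount is not numeric
        refined.append(
            {"order_id": int(row["order_id"]), "region": row["region"], "amount": amount}
        )
    return refined


def curate(refined_rows):
    """Curated Information Layer: aggregate rows into a simple dimensional form."""
    totals = defaultdict(float)
    for row in refined_rows:
        totals[row["region"]] += row["amount"]
    return dict(totals)


def business_view(curated):
    """Access & Interpretation: expose business-friendly names to consumers."""
    return [
        {"Region": region, "Total Sales": round(total, 2)}
        for region, total in sorted(curated.items())
    ]


report = business_view(curate(refine(RAW_CSV)))
for line in report:
    print(line)
```

Each function depends only on the output of the previous stage, which mirrors how the access layer hides the physical layout of the curated data from consumers.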

This architecture has the following components:

- Big Data Service

Oracle Big Data Service (BDS) is a fully managed, automated cloud service that provides clusters with a Hadoop environment. BDS makes it easy for customers to deploy Hadoop clusters of all sizes and simplifies the process of making Hadoop clusters both highly available and secure. Based on Oracle's best practices, BDS implements high availability and security, and reduces the need for advanced Hadoop skills. BDS offers the commonly used Hadoop components, which makes it simple for enterprises to move workloads to the cloud and ensures compatibility with on-premises solutions.

Oracle Cloud SQL is an available add-on service that enables customers to initiate Oracle SQL queries on data in HDFS, Kafka, and Oracle Object Storage. Any user, application, or analytics tool can work with data stores to minimize data movement and speed queries. BDS interoperates with data integration, data science, and analysis services, while enabling developers to easily access data using Oracle SQL. Enterprises can eliminate data silos and ensure that data lakes are not isolated from other corporate data sources.

- Data Catalog

Oracle Cloud Infrastructure Data Catalog is a fully managed, self-service data discovery and governance solution for your enterprise data. Data catalogs are essential to an organization's ability to search for and find data to analyze. They help data professionals discover data and support data governance.

Use Data Catalog as a single collaborative environment to manage technical, business, and operational metadata. You can harvest technical metadata from a wide range of supported data sources that are accessible using public or private IP addresses. You can organize, find, access, understand, enrich, and activate this metadata. Utilize on-demand or schedule-based automatic harvesting to ensure the data catalog always has up-to-date information. You benefit from all of the security, reliability, performance, and scale of Oracle Cloud.

- Data Flow

Oracle Cloud Infrastructure Data Flow is a fully managed service for running Apache Spark applications. Data Flow applications are reusable templates consisting of a Spark application, its dependencies, default parameters, and a default runtime resource specification. You can manage all aspects of Data Flow and the application development lifecycle, tracking and executing Apache Spark jobs using the REST APIs through the API Gateway and available functions.

Data Flow supports rapid application delivery by allowing developers to focus on their application development. It provides log management and a runtime environment to execute applications. You can integrate the applications and workflows and access APIs through the user interface. It eliminates the need for setting up infrastructure, cluster provisioning, software installation, storage, and security.

- Autonomous Data Warehouse

Oracle Autonomous Data Warehouse is a self-driving, self-securing, self-repairing database service that is optimized for data warehousing workloads. You do not need to configure or manage any hardware, or install any software. Oracle Cloud Infrastructure handles creating the database, as well as backing up, patching, upgrading, and tuning the database.

- Data Integration

Oracle Cloud Infrastructure Data Integration is a fully managed, serverless cloud service to ingest and transform data for data science and analytics. With Oracle’s Data Flow designer, Data Integration helps simplify your complex data extract, transform, and load processes (ETL/E-LT) into data lakes and warehouses. It provides automated schema drift protection with rule-based integration flows, which helps you avoid broken integration flows and reduce maintenance as data schemas evolve.

- Data Science

Oracle Cloud Infrastructure Data Science is a fully managed and serverless platform for data scientists to build, train, and manage machine learning models on Oracle Cloud Infrastructure. Data scientists can use Oracle's Accelerated Data Science (ADS) library, which Oracle has enhanced for Automated Machine Learning (AutoML), model evaluation, and model explanation.

ADS is a Python library that contains a comprehensive set of data connections that allow data scientists to access and use data from many different data stores to produce better models. The ADS library supports Oracle’s own AutoML, as well as open-source tools such as H2O.ai and Auto-Sklearn.

Data scientists and infrastructure administrators can easily deploy data science models as Oracle Functions, a highly scalable, on-demand, serverless architecture on OCI. Team members can use the model catalog to preserve and share completed machine learning models and the artifacts necessary to reproduce, test, and deploy them.
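
To illustrate the idea behind the rule-based schema drift protection mentioned for Data Integration, the following sketch selects columns by name pattern instead of by a fixed column list, so that a flow keeps working when the source schema gains columns. It is a conceptual illustration in plain Python, not the Data Integration API: the `RULES` structure, the function names, and the sample records are all hypothetical.

```python
import fnmatch

# Hypothetical rules: pick columns by name pattern rather than a fixed list.
RULES = {"include": ["customer_*", "order_total"], "exclude": ["*_internal"]}


def select_columns(columns, rules):
    """Return columns matching any include pattern and no exclude pattern."""
    selected = []
    for name in columns:
        included = any(fnmatch.fnmatchcase(name, p) for p in rules["include"])
        excluded = any(fnmatch.fnmatchcase(name, p) for p in rules["exclude"])
        if included and not excluded:
            selected.append(name)
    return selected


def apply_rules(record, rules):
    """Project a record onto the columns chosen by the rules."""
    return {name: record[name] for name in select_columns(record.keys(), rules)}


# Original source schema.
record_v1 = {"customer_id": 7, "customer_name": "Ada", "order_total": 42.0}

# Drifted schema: the source added three columns the flow was never told about.
record_v2 = {
    "customer_id": 7,
    "customer_name": "Ada",
    "customer_tier": "gold",
    "customer_notes_internal": "do not export",
    "order_total": 42.0,
    "legacy_code": "Z9",
}

print(apply_rules(record_v1, RULES))
print(apply_rules(record_v2, RULES))
```

Because the rules describe which columns are wanted rather than naming each one, the new `customer_tier` column flows through automatically, while the unexpected `customer_notes_internal` and `legacy_code` columns are ignored without breaking the flow.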

About Data Lakes

A data lake is a scalable, centralized repository that enables an enterprise to store all its raw data in a cost-effective, elastic environment. For a data lake to be effective, an organization must examine its specific governance needs, workflows, and tools. Building around these core elements creates a powerful data lake that integrates into existing architectures and connects data to users.

A data lake provides the following benefits:

- Accelerated time to decisions by leveraging analytics and machine learning

- Collection and mining of big data for data scientists, analysts, and developers

To make unstructured data stored in a data lake useful, you must process and prepare it for analysis. This is often challenging if you lack extensive data engineering resources.
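
As a small illustration of this preparation step, the following sketch turns raw text lines into structured records that can then be analyzed. It assumes a hypothetical log format and uses only the Python standard library. The sample lines, the `LINE_PATTERN` expression, and the `prepare` function are invented for this example.

```python
import re
from collections import Counter

# Hypothetical raw lines as they might land in a data lake.
RAW_LINES = [
    "2024-01-05 10:15:02 ERROR payment-service Timeout contacting gateway",
    "2024-01-05 10:15:09 INFO  cart-service Item added",
    "malformed line without the expected fields",
    "2024-01-05 10:16:44 ERROR cart-service Inventory lookup failed",
]

# Assumed layout: date, time, level, service name, free-text message.
LINE_PATTERN = re.compile(
    r"^(?P<date>\d{4}-\d{2}-\d{2}) (?P<time>\d{2}:\d{2}:\d{2}) "
    r"(?P<level>[A-Z]+)\s+(?P<service>\S+) (?P<message>.+)$"
)


def prepare(lines):
    """Parse raw lines into dictionaries and skip lines that do not match."""
    records = []
    for line in lines:
        match = LINE_PATTERN.match(line)
        if match:
            records.append(match.groupdict())
    return records


records = prepare(RAW_LINES)

# Once the data is structured, a simple analysis becomes a one-liner.
errors_by_service = Counter(r["service"] for r in records if r["level"] == "ERROR")
print(errors_by_service)
```

Real data lakes hold far more varied formats, so production pipelines typically need many such parsers plus validation and monitoring, which is where the data engineering effort goes.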

The following lists the technical challenges of maintaining on-premises data lakes.

- Upfront costs and lack of flexibility: When organizations build their own on-premises infrastructure, they must plan, procure, and manage the hardware infrastructure, spin up servers, and also deal with outages and downtime.

- Ongoing maintenance costs: When operating an on-premises data lake, organizations must account for ongoing maintenance costs, which mostly manifest as IT and engineering costs. These include the costs of patching, maintaining, upgrading, and supporting the underlying hardware and software infrastructure.

- Lack of agility and administrative tasks: IT organizations must provision resources, handle uneven workloads at a large scale, and keep up with the pace of rapidly changing, community-driven, open-source software innovation.

- Complexity of building data pipelines: Data engineers must deal with the complexity of integrating a wide range of tools to ingest, organize, preprocess, orchestrate batch ETL jobs, and query the data stored in the lake.

- Scalability and suboptimal resource utilization: As your user base grows, your organization must manually manage resource utilization and create additional servers to scale up on demand. Most on-premises deployments of Hadoop and Spark directly tie the compute and storage resources to the same servers, creating an inflexible model.
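
The cost of tying compute and storage to the same servers can be shown with a short worked calculation. The workload and node sizes below are hypothetical figures chosen only to make the arithmetic easy to follow. They do not describe any particular hardware or service.

```python
import math


def coupled_nodes(storage_tb, cores, node_storage_tb, node_cores):
    """Nodes needed when every node supplies both storage and compute."""
    nodes_for_storage = math.ceil(storage_tb / node_storage_tb)
    nodes_for_compute = math.ceil(cores / node_cores)
    # The larger requirement dictates the cluster size.
    return max(nodes_for_storage, nodes_for_compute)


# Hypothetical workload: storage-heavy, compute-light.
STORAGE_TB = 500
CORES_NEEDED = 64

# Hypothetical node shape: 10 TB of disk and 16 cores per server.
NODE_STORAGE_TB = 10
NODE_CORES = 16

nodes = coupled_nodes(STORAGE_TB, CORES_NEEDED, NODE_STORAGE_TB, NODE_CORES)
provisioned_cores = nodes * NODE_CORES
idle_cores = provisioned_cores - CORES_NEEDED

# With storage held separately, compute is sized on its own.
decoupled_compute_nodes = math.ceil(CORES_NEEDED / NODE_CORES)

print(f"Coupled cluster: {nodes} nodes, {idle_cores} of {provisioned_cores} cores idle")
print(f"Decoupled compute: {decoupled_compute_nodes} nodes")
```

In this example the storage requirement forces a 50-node cluster even though 4 nodes would cover the compute demand, which is the inflexibility that separating compute from storage avoids.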

The following lists the business benefits of moving your data lakes to the cloud.

- Lower engineering costs and managed services: Build preintegrated data pipelines more efficiently with cloud-based tools and reduce data engineering costs. Transfer scaling management to your cloud provider using cloud services such as Object Storage and Autonomous Data Warehouse (ADW) that provide transparent scaling. You don't need to add machines or manage clusters on cloud-based data lakes.

- Leverage agile infrastructure and the latest technologies: Design your data lake for new use cases with our flexible, agile, and on-demand cloud infrastructure. You can quickly upgrade to the latest technology and add new cloud services as they become available, without redesigning your architecture.