Sun Blade X6275 Server Module Service Manual

Introduction to the Sun Blade X6275 Server Module

This chapter provides an overview of the features of the Oracle Sun Blade X6275 server module and includes its specifications.


1.1 Sun Blade X6275 server module Overview


1.1.1 Product Description

The Sun Blade X6275 server module is a dual-node high-performance computing (HPC) blade. Its two compute nodes (Node 0 and Node 1) are housed on a single motherboard in a single blade enclosure. The two compute nodes are identical and symmetric, but fully independent of each other.

Each node is based on a two-socket Intel Xeon® platform, which consists of the IOH24, the I/O Controller Hub 10 (ICH10R), and the I/O subsystem. Each compute node has its own ILOM service processor, based on the AST2100 chip.

Each node includes a Sun Flash Module, which provides a reliable and secure boot source for the node.

There are two versions of the Sun Blade X6275 server module:

- The Sun Blade X6275 1GbE Server Module is supported in the Sun Blade 6000 modular system chassis and in the Sun Blade 6048 modular system chassis.

- The Sun Blade X6275 IB Server Module is supported in the Sun Blade 6048 modular system chassis.

Each compute node on the Sun Blade X6275 IB Server Module has one 4x QDR (Quad Data Rate) InfiniBand (IB) port that interfaces to the Network Express Module (NEM) 1 slot on the Sun Blade modular system chassis midplane, for a total of two IB ports per server module.

1.1.2 Product Features

The Sun Blade X6275 server module product features are listed in TABLE 1-1.

TABLE 1-1 Sun Blade X6275 server module Features

|

Feature

|

Description

|

|

CPU

|

Up to four quad-core Intel Xeon Processor 5500 Series processors per server module (two per compute node): 8 cores per compute node, for a total of 16 cores per server module.

|

|

Nodes

|

Two independent, symmetric compute nodes, 0 and 1. Each compute node contains two Intel Xeon Processor 5500 Series processors.

|

|

Memory

|

Twenty-four memory slots per server module (twelve per compute node). Slots support 1333 MHz and 1066 MHz DDR3 ECC registered DIMMs.

Up to 2 DDR3 DIMMs per channel, 3 channels per installed processor.

Up to 48 GB per compute node using 4 GB DIMMs (96 GB per server module), or up to 96 GB per compute node using 8 GB DIMMs (192 GB per server module).

See DDR3 DIMM Guidelines.

|

|

Video Memory

|

8 MB, Maximum resolution: 1280x1024 pixels

|

|

Flash Modules

|

Two on-board 24 GB Sun Flash Modules (one per compute node).

|

|

USB Drives

|

Two on-board USB 2.0 drive slots (one per compute node).

|

|

Midplane I/O Module Support

|

The following combinations are supported:

- Sun Blade X6275 IB server module installed in a Sun Blade 6048 modular system chassis with a Sun Blade 6048 InfiniBand QDR Switched Network Express Module

- Sun Blade X6275 1GbE server module with integrated GbE support installed into a Sun Blade 6000 or Sun Blade 6048 modular system chassis

|

|

NEMs

|

The following NEMs are supported:

- Gigabit Ethernet (CU) 10-port Passthru Network Express Module (Recommended)

- Sun Blade 6000 Virtualized Multi-Fabric 10GbE Network Express Module

- Sun Blade 6000 Multi-Fabric Network Express Module

- Sun Blade 6048 InfiniBand QDR Switched Network Express Module

|

|

Service Processor (SP)

|

Server modules include a service processor (SP) for each compute node. The SP provides IPMI 2.0 compliant remote management capabilities across a broad range of Sun server models. Each server module node’s SP features:

- Integrated Lights Out Manager (ILOM)

- Local ILOM command-line access using a serial connection

- 10/100 management Ethernet port to midplane

- Remote keyboard, video, mouse, and storage (KVMS) over IP

See About ILOM for more ILOM information.

|

|

Front Panel I/O

|

Two Universal Connector Ports (UCP), one per compute node, are available for use with the multi-port dongle cable. The dongle cable provides the following interface connections:

- VGA graphics

- Serial port

- Dual USB ports (keyboard/mouse/USB disk)

See Attaching a Multi-Port Dongle Cable.

|

|

Operating systems

|

- Solaris 10 Update 7 (S10U7)

- OpenSolaris 2009.06 + SRU2

- CentOS 5.3 (64-bit)

- SUSE Linux Enterprise Server 10 SP2 (64-bit)

- SUSE Linux Enterprise Server 11 (64-bit)

- Red Hat Enterprise Linux 4.8 (64-bit)

- Red Hat Enterprise Linux 5.3 (64-bit)

- Windows Server 2008 Datacenter (64-bit)

|

|

Chassis Compatibility

|

The Sun Blade X6275 server module fits into the Sun Blade 6048 and Sun Blade 6000 modular system chassis.

Note - The chassis, the CMM ILOM and the SP ILOM must be compatible. See TABLE 1-2 for details.

|
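The memory limits in TABLE 1-1 follow from the population rules: two processors per compute node, three channels per processor, and up to two DIMMs per channel. The following Python sketch (Python is an illustrative choice; this manual itself contains no code) reproduces that arithmetic. It assumes every slot is filled with DIMMs of a single size and does not check the other population rules in DDR3 DIMM Guidelines; all names are hypothetical.

```python
# Per-node memory topology, as stated in TABLE 1-1.
PROCESSORS_PER_NODE = 2
CHANNELS_PER_PROCESSOR = 3
DIMMS_PER_CHANNEL = 2
NODES_PER_SERVER_MODULE = 2


def dimm_slots_per_node() -> int:
    """DIMM slots available to one compute node."""
    return PROCESSORS_PER_NODE * CHANNELS_PER_PROCESSOR * DIMMS_PER_CHANNEL


def max_memory_gb(dimm_size_gb: int) -> tuple[int, int]:
    """Return (GB per compute node, GB per server module) with every slot filled."""
    per_node = dimm_slots_per_node() * dimm_size_gb
    return per_node, per_node * NODES_PER_SERVER_MODULE


if __name__ == "__main__":
    # DIMM sizes offered as memory kits in TABLE 1-6.
    for size in (2, 4, 8):
        node_gb, module_gb = max_memory_gb(size)
        print(f"{size} GB DIMMs: {node_gb} GB per node, {module_gb} GB per server module")
```

The 4 GB and 8 GB results (48/96 GB and 96/192 GB) match the limits stated in the table.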

1.1.3 About ILOM

Sun's Integrated Lights Out Manager (ILOM) resides on an integrated system service processor (SP) in the Sun Blade server modules. Each node contains its own SP ILOM with its own unique IP address.

The chassis also has an ILOM, called the Chassis Management Module (CMM) ILOM, which is used to manage chassis functions.

The SP ILOM can be accessed through its IP address, or through the CMM ILOM.

The SP ILOM provides full remote KVMS (keyboard, video, mouse, and storage) support, including remote media. It supports remote administration through a browser-based interface, a command-line interface (CLI), a remote console, SNMP v1, v2c, and v3, and IPMI v2.0.

A system administrator can manage each node out-of-band, through the management Ethernet, or in-band, through the server's operating system. Using out-of-band management, the administrator can remotely control system power, monitor system FRU status, and load system firmware. Using in-band management, the administrator can monitor system status and control system power-down.

Refer to the Sun Blade X6275 server module ILOM Supplement for detailed information. For general information, refer to the Integrated Lights Out Manager documentation collection.

1.1.3.1 ILOM 2.0 and ILOM 3.0

The Sun Blade X6275 can be equipped with ILOM 2.0 or ILOM 3.0. The SP ILOM must be matched to the CMM ILOM and the chassis as follows:

TABLE 1-2 ILOM and Chassis Compatibility

| ILOM Level | Chassis | SP ILOM Firmware | CMM ILOM Firmware |
|---|---|---|---|
| 2.0 | Sun Blade 6048 | 2.0.3.13 or 2.0.3.17 | 2.0.3.13 |
| 3.0 | Sun Blade 6000 or Sun Blade 6048 | 3.0.4.10 | 3.0.6.11 |

In some instances, ILOM 2.0 and ILOM 3.0 work differently. The differences are noted where they occur. If no difference is noted, they work identically.

- For more on ILOM 2.0, see the Sun Integrated Lights Out Manager 2.0 Supplement for the Sun Blade X6275 Server Module and the Sun Integrated Lights Out Manager (ILOM) 2.0 Documentation collection.

- For more on ILOM 3.0, see the Sun Integrated Lights Out Manager 3.0 Supplement for the Sun Blade X6275 Server Module and the Sun Integrated Lights Out Manager (ILOM) 3.0 Documentation collection.
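TABLE 1-2 can be treated as a lookup table. The following sketch, a hypothetical helper that is not part of ILOM, checks whether a given chassis, SP ILOM firmware version, and CMM ILOM firmware version appear together in one row of the table. It assumes exact version-string matches and makes no assumption about firmware releases that the table does not list.

```python
# Supported combinations, transcribed from TABLE 1-2.
SUPPORTED = {
    "2.0": {
        "chassis": {"Sun Blade 6048"},
        "sp": {"2.0.3.13", "2.0.3.17"},
        "cmm": {"2.0.3.13"},
    },
    "3.0": {
        "chassis": {"Sun Blade 6000", "Sun Blade 6048"},
        "sp": {"3.0.4.10"},
        "cmm": {"3.0.6.11"},
    },
}


def is_supported(chassis: str, sp_fw: str, cmm_fw: str) -> bool:
    """Return True only if all three values appear in the same TABLE 1-2 row."""
    for row in SUPPORTED.values():
        if chassis in row["chassis"] and sp_fw in row["sp"] and cmm_fw in row["cmm"]:
            return True
    return False


if __name__ == "__main__":
    print(is_supported("Sun Blade 6048", "2.0.3.17", "2.0.3.13"))
    print(is_supported("Sun Blade 6000", "2.0.3.13", "2.0.3.13"))
```

Checking all three values against the same row, rather than each value independently, is what catches a mixed pair such as an ILOM 3.0 SP behind an ILOM 2.0 CMM.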

1.1.3.2 ILOM Node Numbering

A single Sun Blade X6275 server module contains two complete systems, each referred to as a node. Each node has its own SP running its own ILOM.

ILOM 2.0 and ILOM 3.0 number the nodes differently.

ILOM 2.0 Node Numbering

The 2.0 CMM ILOM displays slot addresses as if there were two separate server modules.

- Node 0 is addressed by the actual slot number.

- Node 1 is addressed by the slot number plus N, where N is 12 in a Sun Blade 6048 and 10 in a Sun Blade 6000 chassis. For example, for a server module in slot 6:

- Node 0 is addressed by the actual slot number, 6.

- Node 1 is addressed by:

- slot 18 (the actual slot number plus 12) in a Sun Blade 6048 chassis

- slot 16 (the actual slot number plus 10) in a Sun Blade 6000 chassis

This convention is reflected in the following CMM ILOM features:

- The /SYS/SLOTID target is different for the two blade ILOMs.

- The chassis’ CMM ILOM provides a separate target for each node. For example, if the blade is in slot 6, the CMM ILOM provides /CH/BL6 and /CH/BL18 (Sun Blade 6048) or /CH/BL16 (Sun Blade 6000).

- The CMM ILOM web interface provides separate management displays for the two blade ILOMs, using the two slot IDs.

For more information, see the Sun Integrated Lights Out Manager 2.0 Supplement for the Sun Blade X6275 Server Module.

ILOM 3.0 Node Numbering

ILOM 3.0 identifies nodes by the actual slot number plus the node ID.

For example, in slot 6, the nodes are:

- Slot 6, node 0

- Slot 6, node 1

The CMM targets for the nodes in slot 6 are:

- /CH/BL6/NODE0 for node 0

- /CH/BL6/NODE1 for node 1

For more information, see the Sun Integrated Lights Out Manager 3.0 Supplement for the Sun Blade X6275 Server Module.
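The two numbering schemes can be summarized as one function that maps a physical slot and node ID to the CMM target described above. This is an illustrative sketch only: `cmm_target` is a hypothetical name, and the function just builds the target string; it does not communicate with ILOM.

```python
# Offset added to the physical slot number to address node 1 under ILOM 2.0.
ILOM2_NODE1_OFFSET = {"Sun Blade 6048": 12, "Sun Blade 6000": 10}


def cmm_target(ilom_level: str, chassis: str, slot: int, node: int) -> str:
    """Return the CMM target for one node of a Sun Blade X6275 server module."""
    if node not in (0, 1):
        raise ValueError("a Sun Blade X6275 server module has only node 0 and node 1")
    if ilom_level == "2.0":
        # ILOM 2.0 presents node 1 as if it were a separate blade in another slot.
        address = slot if node == 0 else slot + ILOM2_NODE1_OFFSET[chassis]
        return f"/CH/BL{address}"
    if ilom_level == "3.0":
        # ILOM 3.0 keeps the physical slot and adds a per-node target.
        return f"/CH/BL{slot}/NODE{node}"
    raise ValueError(f"unsupported ILOM level: {ilom_level}")


if __name__ == "__main__":
    for level in ("2.0", "3.0"):
        for node in (0, 1):
            print(level, node, cmm_target(level, "Sun Blade 6048", 6, node))
```

For a blade in slot 6 of a Sun Blade 6048, this yields /CH/BL6 and /CH/BL18 under ILOM 2.0, and /CH/BL6/NODE0 and /CH/BL6/NODE1 under ILOM 3.0, matching the examples in this section.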

1.1.4 About the Sun Blade Modular System Chassis

Sun Blade X6275 server modules must reside within a Sun Blade 6048 or Sun Blade 6000 modular system chassis.

1.1.4.1 Sun Blade 6048 Modular System Chassis

The Sun Blade 6048 modular system chassis consists of four separate shelves contained within a unibody chassis. Each shelf holds up to 12 Sun Blade X6275 server modules, so a single Sun Blade 6048 chassis holds up to 48 server modules, for a maximum of 96 compute nodes.

For more information, refer to the Sun Blade 6048 Modular System Chassis documentation.

Note - For systems with CMM ILOM 2.0.3.13, the Sun Blade X6275 server module must be inserted into the chassis before you power on. If the blade is not in the chassis before you power on, ILOM does not recognize node 1. This requirement does not apply to ILOM 3.0 systems.

1.1.4.2 Sun Blade 6000 Modular System Chassis

The Sun Blade 6000 modular system chassis holds up to 10 Sun Blade X6275 server modules for a maximum of 20 compute nodes per Sun Blade 6000 modular system chassis.

For more information, refer to the Sun Blade 6000 Modular System Chassis documentation.
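The chassis capacities in the two sections above are simple products of shelves, server modules per shelf, and nodes per server module. The sketch below restates them; treating the Sun Blade 6000 as a chassis with a single "shelf" is a modeling assumption of this example, not a statement about its physical design, and the names are hypothetical.

```python
# Capacities stated in sections 1.1.4.1 and 1.1.4.2.
# Modeling the Sun Blade 6000 as one "shelf" is an assumption of this sketch.
CHASSIS_LAYOUT = {
    "Sun Blade 6048": {"shelves": 4, "modules_per_shelf": 12},
    "Sun Blade 6000": {"shelves": 1, "modules_per_shelf": 10},
}
NODES_PER_SERVER_MODULE = 2


def chassis_capacity(chassis: str) -> tuple[int, int]:
    """Return (maximum server modules, maximum compute nodes) for a chassis."""
    layout = CHASSIS_LAYOUT[chassis]
    modules = layout["shelves"] * layout["modules_per_shelf"]
    return modules, modules * NODES_PER_SERVER_MODULE


if __name__ == "__main__":
    for name in CHASSIS_LAYOUT:
        modules, nodes = chassis_capacity(name)
        print(f"{name}: {modules} server modules, {nodes} compute nodes")
```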

1.1.5 About the Chassis Monitoring Module (CMM)

The Chassis Monitoring Module (CMM) provides a common management interface for each server module and compute node. The CMM is the primary point of management interaction for all shared chassis components and functions.

Through their associated ILOM service processors, each compute node's IPMI, HTTPS, CLI (SSH), SNMP, and file transfer interfaces are directly accessible from the CMM Ethernet management port. Each compute node is assigned an IP address that is used for CMM management. IP addresses for compute nodes are assigned statically or through DHCP.

Note - The CMM ILOM firmware must be compatible with the SP ILOM firmware. See TABLE 1-2 for compatibility levels.

1.2 Specifications

This section contains Sun Blade X6275 server module specifications.


1.2.1 Server Module Boards

The boards installed in the Sun Blade X6275 server module are listed in TABLE 1-3.

TABLE 1-3 Server Module Boards

|

Board

|

Description

|

Reference

|

|

Motherboard

|

The motherboard includes four CPU module sockets, slots for 24 DDR3 DIMMs, slots for flash modules, slots for USB drives, I/O chipsets, and a chassis midplane connector.

|

Replacing the Motherboard Assembly (FRU)

|

|

SP board

|

The service processor board subsystem includes independent integrated lights out management (ILOM) for compute nodes 0 and 1. This SP board is attached to the motherboard and is not separately replaceable.

The service processor (ILOM) subsystem controls the host power and monitors host system events (power and environmental). The service processor (ILOM) subsystem in each compute node is powered up whenever the blade is installed in a chassis that receives AC input power, even when the main power to each compute node is off.

|

Replacing the Motherboard Assembly (FRU)

About ILOM

|

1.2.2 Dimensions

The Sun Blade X6275 server module form factor dimensions are listed in TABLE 1-4.

TABLE 1-4 Sun Blade X6275 server module Dimensions

| Dimension | Sun Blade X6275 server module |
|---|---|
| Height | 327 mm / 12.87 inches |
| Width | 43 mm / 1.69 inches |
| Depth | 512 mm / 20.16 inches |
| Weight | Maximum: approximately 20.61 lb (9.36 kg), with 24 4 GB DDR3 DIMMs and 4 Intel Xeon EP processors installed |

1.2.3 Environmental Specifications

TABLE 1-5 Server Module Environmental Specifications

| Specification | Value |
|---|---|
| Temperature (operating) | 41 to 90 °F (5 to 32 °C) |
| Temperature (storage) | -40 to 158 °F (-40 to 70 °C) |
| Humidity | 10% to 90%, non-condensing |
| Operating altitude | 0 to 10,000 feet (0 to 3048 m) |

Note - System cooling might be affected by dust and contaminant buildup. Open and check systems approximately every six months, or more often in dirty operating environments. Check system heat sinks, fans, and air openings. If necessary, clean systems by brushing or blowing out contaminants, or by carefully vacuuming them from the system.
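As an illustration of how the TABLE 1-5 operating limits might be applied, the following hypothetical sketch reports which limits a set of measured site conditions exceeds. It uses the Celsius and metric values from the table; the "non-condensing" humidity condition cannot be judged from a single number and is not checked.

```python
# Operating limits from TABLE 1-5 (inclusive ranges).
OPERATING_TEMP_C = (5.0, 32.0)
HUMIDITY_PCT = (10.0, 90.0)
ALTITUDE_M = (0.0, 3048.0)


def fahrenheit_to_celsius(temp_f: float) -> float:
    """Convert degrees Fahrenheit to degrees Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0


def operating_violations(temp_c: float, humidity_pct: float, altitude_m: float) -> list[str]:
    """List every TABLE 1-5 operating limit that the given conditions fall outside."""
    checks = [
        ("temperature", temp_c, OPERATING_TEMP_C, "C"),
        ("humidity", humidity_pct, HUMIDITY_PCT, "%"),
        ("altitude", altitude_m, ALTITUDE_M, "m"),
    ]
    violations = []
    for name, value, (low, high), unit in checks:
        if not low <= value <= high:
            violations.append(f"{name} {value:g} {unit} outside {low:g}-{high:g} {unit}")
    return violations


if __name__ == "__main__":
    print(operating_violations(25, 50, 500))
    print(operating_violations(35, 50, 500))
```

Note that `fahrenheit_to_celsius(90)` gives about 32.2, which the table rounds to 32 °C; the sketch uses the Celsius limits as written.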

1.2.4 Customer Replaceable Units (CRUs) and Field Replaceable Units (FRUs)

This section lists the CRUs and FRUs. Customer Replaceable Units (CRUs) are designed to be replaced by customers. Field Replaceable Units (FRUs) must be replaced by Sun service personnel.

Caution - Changing FRUs can damage your equipment and void your warranty.

TABLE 1-6 CRU and FRU List (Subject to Change)

| Category | Part | CRU or FRU |
|---|---|---|
| Base Blade | Includes motherboard, SP card, batteries, and cables (no CPU and no memory) | FRU |
| CPUs | 4 Intel Xeon Quad-Core X5570 - 2.93 GHz, 8MB Cache, 6.4 GT/s QPI, HT, Turbo Boost, 95W, with heat sinks | FRU |
| | 4 Intel Xeon Quad-Core X5560 - 2.80 GHz, 8MB Cache, 6.4 GT/s QPI, HT, Turbo Boost, 95W, with heat sinks | FRU |
| | 4 Intel Xeon Quad-Core E5540 - 2.53 GHz, 8MB Cache, 5.86 GT/s QPI, HT, Turbo Boost, 80W, with heat sinks | FRU |
| | 4 Intel Xeon Quad-Core L5520 - 2.26 GHz, 8MB Cache, 5.86 GT/s QPI, HT, Turbo Boost, 60W, with heat sinks | FRU |
| | 4 Intel Xeon Quad-Core E5520 - 2.26 GHz, 8MB Cache, 5.86 GT/s QPI, HT, Turbo Boost, 80W, with heat sinks | FRU |
| DIMMs | 2GB DDR3 Memory Kit - 1x2GB 1333MHz DIMM | CRU |
| | 4GB DDR3 Memory Kit - 1x4GB 1333MHz DIMM | CRU |
| | 8GB DDR3 Memory Kit - 1x8GB 1066MHz DIMM | CRU |
| Boot and Storage | 24 GB Sun Flash Modules (FMODs) | CRU |
| | USB Drive (third-party supplied) | CRU |
| Miscellaneous | CR2032 RTC battery (1 per compute node) | CRU |
| | Service processor | FRU |
| | Cable kit | CRU |

1.2.5 Components and Part Numbers

Supported components and part numbers are subject to change over time. For the most up-to-date list, go to:

https://support.oracle.com/handbook_private/index.html

Click the name and model of your server, and then click Full Components List for the list of components and part numbers.

1.2.6 Accessory Kits

TABLE 1-7 lists the contents of the accessory kit that is shipped with the server module.

TABLE 1-7 Accessory Kit

| Item | Part Number |
|---|---|
| Sun Blade X6275 Server Module Installation Guide (printed) | 820-6977 |
| Sun Blade X6275 Server Module Getting Started Guide (printed) | 820-6847 |
| Additional safety and license documentation | |

1.3 Illustrated Parts Breakdown

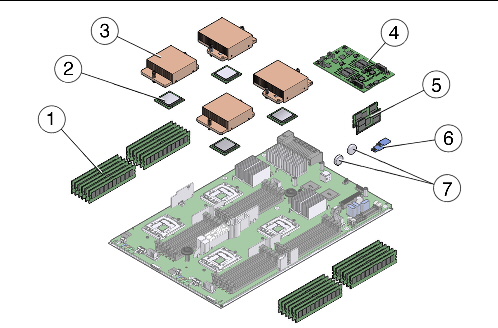

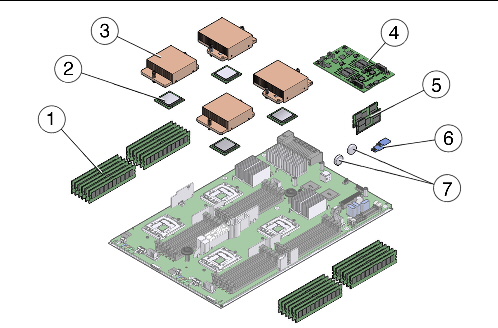

The following illustrations provide exploded views of server module components. Use these illustrations, and the accompanying tables, to identify parts in your system.

FIGURE 1-1 Server Module Components

Figure Legend

| No. | Component | CRU or FRU |
|---|---|---|
| 1 | DDR3 DIMMs (24 max) | CRU |
| 2 | CPU (4 max) | FRU |
| 3 | Heat Sink | FRU |
| 4 | SP module | FRU |
| 5 | Flash Modules (2 max) | FRU |
| 6 | USB Drive (2 max) | FRU |
| 7 | RTC Battery (2) | FRU |

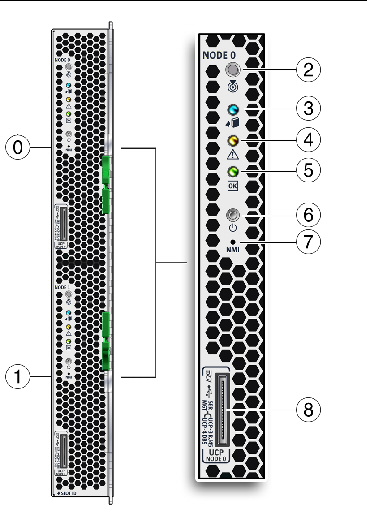

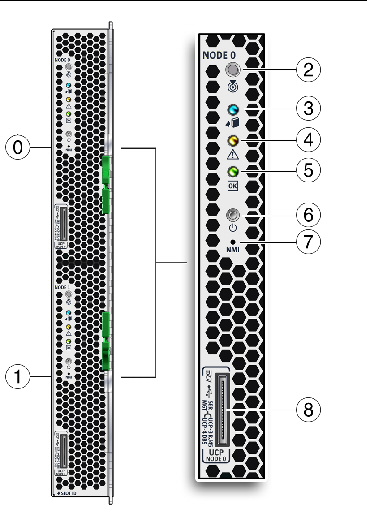

1.4 Sun Blade X6275 server module Front Panel LEDs and Features

FIGURE 1-2 shows front panel features on the Sun Blade X6275 server module.

FIGURE 1-2 Sun Blade X6275 Server Module Front Panel LEDs

Note - After server module insertion, all front panel LEDs blink three times.

Figure Legend Node 0 and Node 1 Server Module LEDs

|

0

|

Node 0

|

|

|

1

|

Node 1

|

|

|

2

|

Server Module Locate LED - White - Use the locate button and locate LED to identify a server module within a fully populated chassis.

- From a remote location: Use the ILOM to turn the locate LED on and off.

- Co-located: Push and release the locate button to make the locate LED blink for 30 minutes (fast blink: 4Hz).

Press the button for more than 4 seconds to light all front panel LEDs for 15 seconds.

|

|

|

3

|

Server Module Ready to Remove LED - Blue

Indicates that the server module can be deconfigured and removed from the chassis. The service processor switches this LED on in response to an ILOM remote Remove command.

LED states:

- Off: Normal Operation. The server module is not ready to remove.

- On: The server module is ready to remove from the chassis.

|

|

|

4

|

Server Module Service Action Required LED - Amber

LED states:

- Off: Normal operation.

- On: Fault (critical and non-critical). When a faulty component, such as a failed DIMM, is identified internally on the server, the Service Action Required LED is also lit.

|

|

|

5

|

Server Module OK LED - Green (blinking or steady on)

LED states:

- Full power: steady on

- Updating: slow blink, 0.5 second on, 0.5 second off

- SP booting: fast blink, 0.125 second on, 0.125 second off

- Node booting: slow blink, 0.5 second on, 0.5 second off

- Standby power: blink, 0.1 second on, 2.9 seconds off

|

|

|

6

|

Server Module Power/Standby button

- Press momentarily to toggle the server between standby power and full power.

- Press and hold for four seconds (while the server is on) to force host power off immediately.

Caution - Pressing the Power/Standby button for more than 4 seconds when in full power initiates an immediate shutdown to standby power and could cause data loss.

See Powering Off the Server Module.

See Powering On the Server Module.

|

|

|

7

|

Non-Maskable Interrupt (NMI) button (Service only)

|

|

|

8

|

Universal Connector Port (UCP), used for dongle cable

See Attaching a Multi-Port Dongle Cable.

|

|
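The green OK LED states above are distinguished only by blink timing. As a hypothetical aid for anyone timing the LED by eye or with a stopwatch, the following sketch maps a measured on/off cycle to the states listed in the legend. The 0.05-second tolerance and the convention of passing a steady-on LED as an off-time of 0 are assumptions of this example. Because "Updating" and "Node booting" share the same timing in the legend, the sketch cannot tell them apart.

```python
import math

# (on seconds, off seconds) -> state, transcribed from the OK LED entry above.
OK_LED_PATTERNS = [
    ((0.5, 0.5), "Updating or node booting"),
    ((0.125, 0.125), "SP booting"),
    ((0.1, 2.9), "Standby power"),
]


def decode_ok_led(on_s: float, off_s: float, tol: float = 0.05) -> str:
    """Interpret one measured blink cycle of the green OK LED.

    A steady-on LED is passed as off_s == 0.
    """
    if off_s == 0:
        return "Full power"
    for (on_ref, off_ref), state in OK_LED_PATTERNS:
        # Both the on-time and the off-time must match within the tolerance.
        if math.isclose(on_s, on_ref, abs_tol=tol) and math.isclose(off_s, off_ref, abs_tol=tol):
            return state
    return "Unknown pattern"


if __name__ == "__main__":
    print(decode_ok_led(0.1, 2.9))
    print(decode_ok_led(0.5, 0.5))
```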

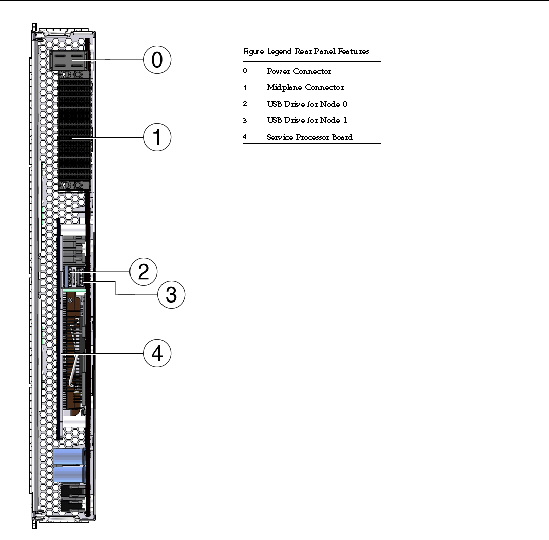

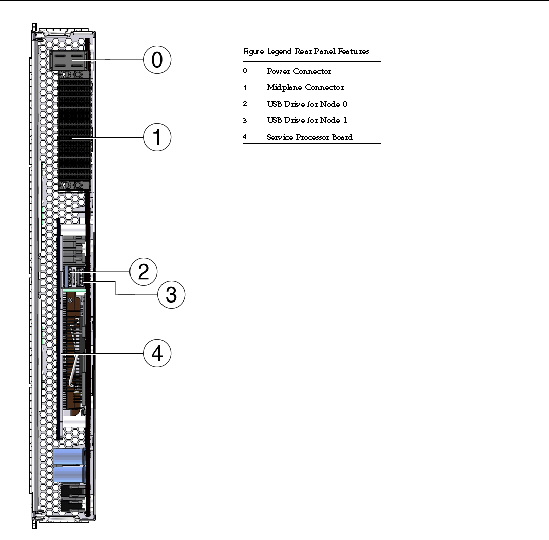

1.5 Sun Blade X6275 server module Rear Panel Features

FIGURE 1-3 shows rear panel features on the Sun Blade X6275 server module.

FIGURE 1-3 Sun Blade X6275 Server Module Rear Panel

820-6849-16

Copyright © 2012, Oracle and/or its affiliates. All rights reserved.