5 Intents

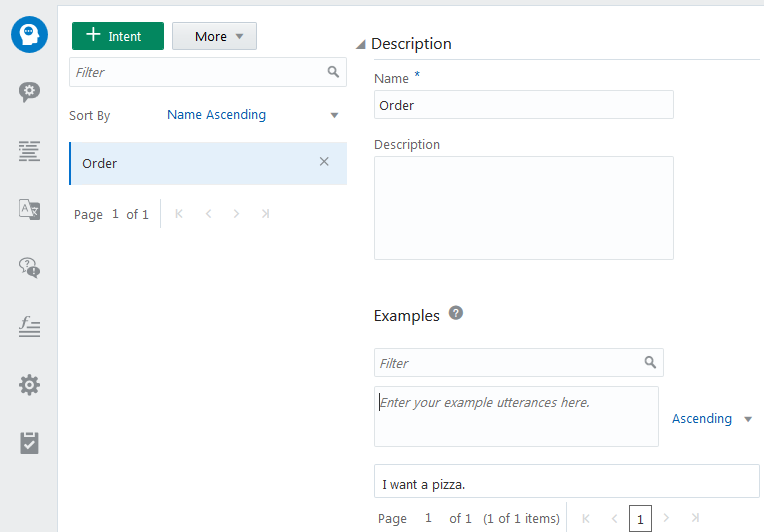

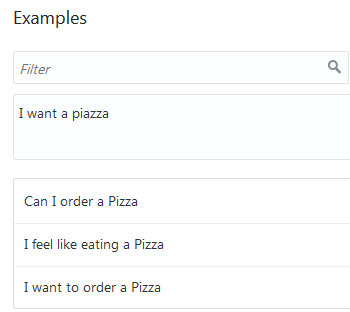

Intents allow your bot to understand what the user wants it to do. An intent categorizes typical user requests by the tasks and actions that your bot performs. The PizzaBot’s OrderPizza intent, for example, labels a direct request, I want to order a Pizza, along with another that implies a request, I feel like eating a pizza.

Create an Intent

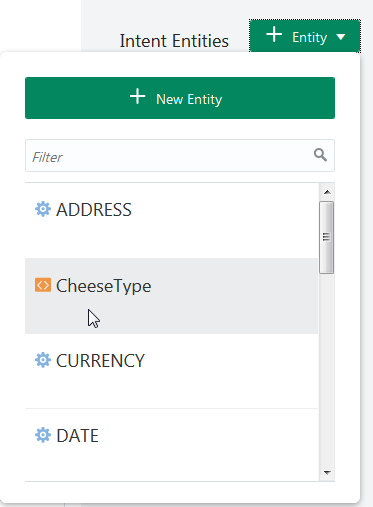

Add Entities to Intents

You can associate custom or built-in entities with an intent.

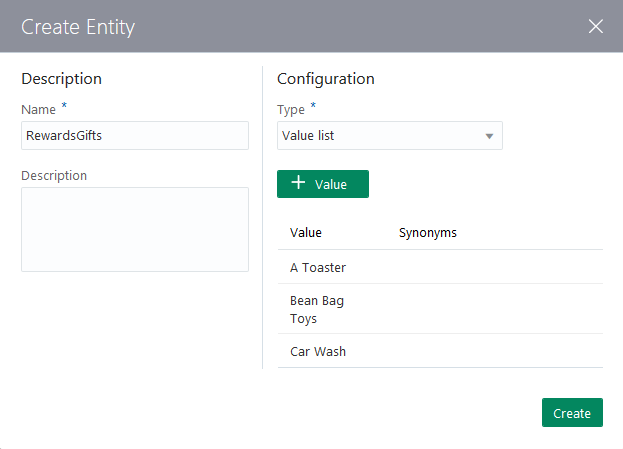

Alternatively, you can click New Entity to add an intent-specific entity. See Custom Entity Types.

Tip:

Only intent entities are included in the JSON payloads that are sent to, and returned by, the Component Service. The ones that aren’t associated with an intent won’t be included, even if they contribute to the intent resolution by recognizing user input. If your custom component accesses entities through entity matches, then be sure to add the entity to your intent. See How Do Custom Components Work?

Import Intents from a CSV File

You can add your intents manually, or import them from a CSV file. You can create this file by exporting the intents and entities from another bot, or by creating it from scratch in a spreadsheet program or a text file.

The CSV file has two columns, query and topIntent:

query,topIntent

I want to order a pizza,OrderPizza

I want a pizza,OrderPizza

I want a pizaa,OrderPizza

I want a pizzaz,OrderPizza

I'm hungry,OrderPizza

Make me a pizza,OrderPizza

I feel like eating a pizza,OrderPizza

Gimme a pie,OrderPizza

Give me a pizza,OrderPizza

pizza I want,OrderPizza

I do not want to order a pizza,CancelPizza

I do not want this,CancelPizza

I don't want to order this pizza,CancelPizza

Cancel this order,CancelPizza

Can I cancel this order?,CancelPizza

Cancel my pizza,CancelPizza

Cancel my pizaa,CancelPizza

Cancel my pizzaz,CancelPizza

I'm not hungry anymore,CancelPizza
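If you create the file from scratch, a short script can write it for you. The following is a minimal sketch that assumes only the two-column layout shown above; the `write_intents_csv` helper is a hypothetical name and is not part of the product.

```python
import csv
import io

# Hypothetical helper: writes (utterance, intent) pairs in the
# query,topIntent layout shown above.
def write_intents_csv(rows, stream):
    writer = csv.writer(stream, lineterminator="\n")
    writer.writerow(["query", "topIntent"])  # header row
    for query, intent in rows:
        writer.writerow([query.strip(), intent.strip()])

rows = [
    ("I want to order a pizza", "OrderPizza"),
    ("Can I cancel this order?", "CancelPizza"),
]

buffer = io.StringIO()
write_intents_csv(rows, buffer)
csv_text = buffer.getvalue()
print(csv_text)
```

To write to disk instead, pass a file opened with `open(path, "w", newline="", encoding="utf-8")`. The `csv` module quotes any utterance that contains a comma, so the two-column layout stays intact.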

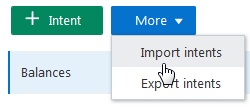

- Click Intents in the left navbar.

- Click More, and then choose Import intents.

- Select the .csv file and then click Open.

- Train your bot.

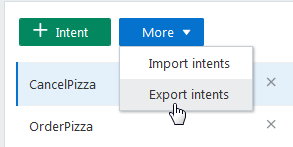

Export Intents to a CSV File

You can reuse your training corpus by exporting it to CSV. You can then import this file to another bot.

- Click Intents in the left navbar.

- Click More, and then choose Export intents.

- Save the file.

Tip:

Remember to train your bot after you import the CSV file.

Intent Training and Testing

Training a model with your training corpus allows your bot to discern what users say (or in some cases, are trying to say).

You can improve the acuity of the cognition through rounds of intent testing and intent training. In Intelligent Bots, you control the training through the intent definitions alone; the bot can’t learn on its own from the user chat.

Test Sets

We recommend that you set aside 20 percent of your corpus for testing your bot and train your bot with the remaining 80 percent. Keep these two sets separate so that the test set remains “unknown” to your bot.

Apply the 80/20 split to each intent’s data set. Randomize your utterances before making this split to allow the training models to weigh the terms and patterns in the utterances equally.
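The per-intent split can be prepared outside the tool before you import the training portion. The following is a minimal sketch, assuming the corpus is held as (utterance, intent) pairs; `split_corpus` and its parameters are hypothetical names used for illustration.

```python
import random
from collections import defaultdict

def split_corpus(rows, test_fraction=0.2, seed=0):
    """Shuffle each intent's utterances, then hold out test_fraction of them."""
    by_intent = defaultdict(list)
    for query, intent in rows:
        by_intent[intent].append(query)

    rng = random.Random(seed)  # fixed seed keeps the split repeatable
    train, test = [], []
    for intent, queries in sorted(by_intent.items()):
        shuffled = queries[:]
        rng.shuffle(shuffled)
        # Hold out at least one utterance, but never the whole intent.
        n_test = min(len(shuffled) - 1, max(1, round(len(shuffled) * test_fraction)))
        test += [(q, intent) for q in shuffled[:n_test]]
        train += [(q, intent) for q in shuffled[n_test:]]
    return train, test

rows = [(f"order phrase {i}", "OrderPizza") for i in range(10)]
rows += [(f"cancel phrase {i}", "CancelPizza") for i in range(10)]
train, test = split_corpus(rows)
print(len(train), len(test))
```

Splitting inside each intent, rather than across the whole corpus, keeps every intent represented in both sets.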

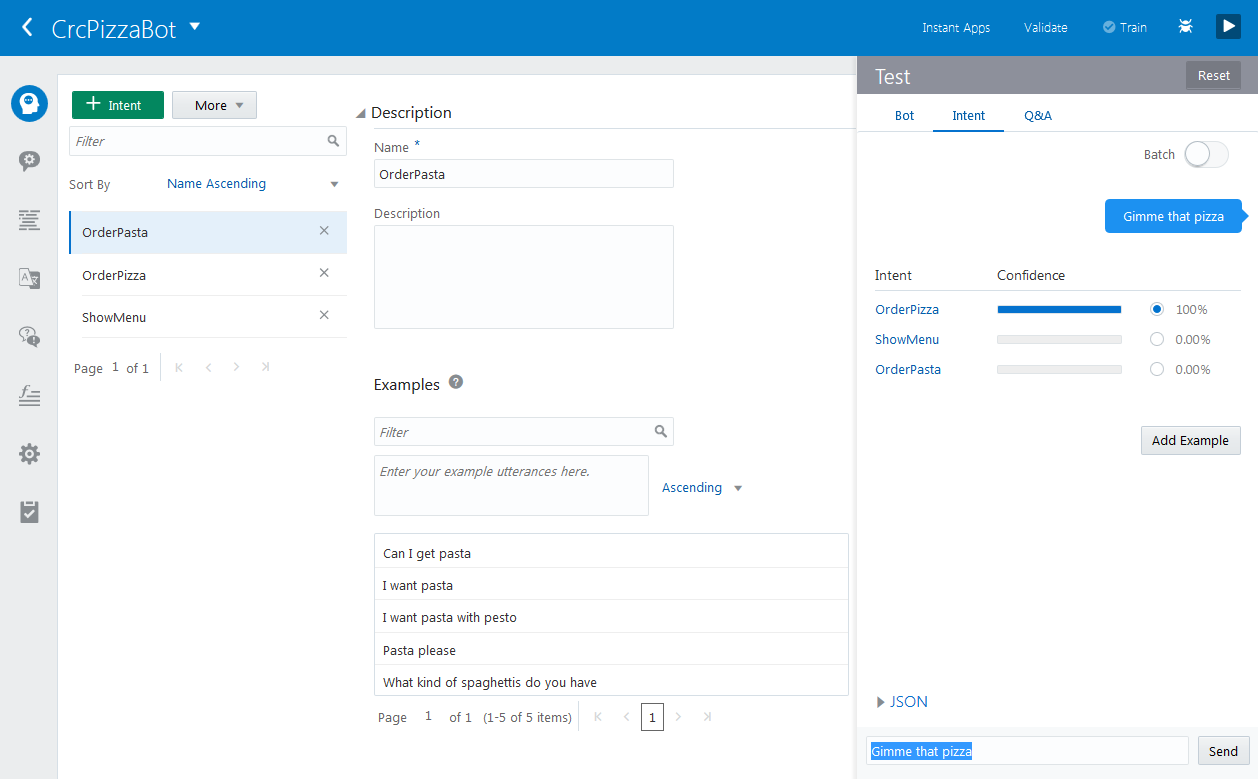

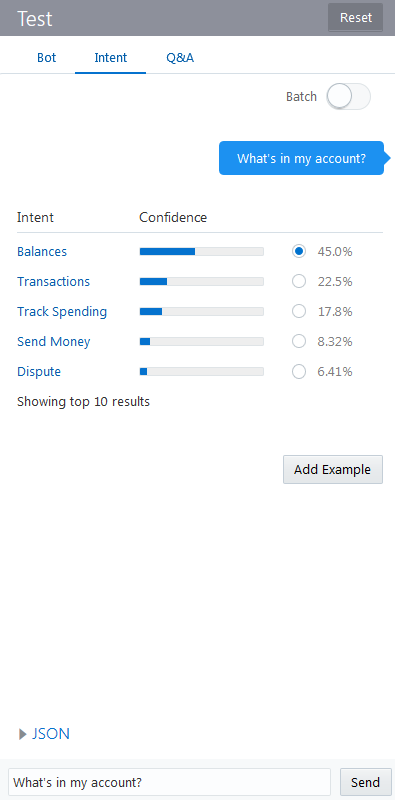

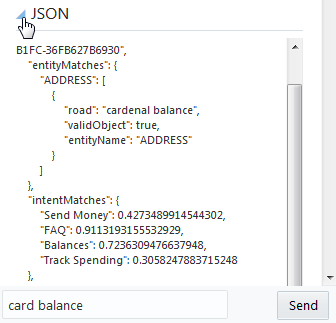

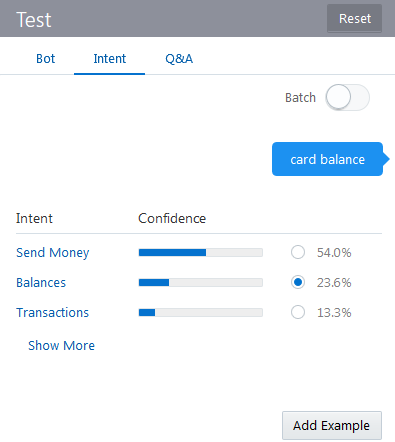

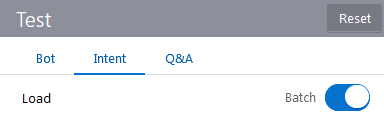

The Intent Tester

The Intent tester is your window into your bot’s cognition. By entering phrases that are not part of the training corpus (the utterances that you’ve maintained in your testing set), you can find out how well you’ve crafted your intents and entities through the ranking and the returned JSON. This ranking, which is the bot’s estimate for the best candidate to resolve the user input, demonstrates its acuity at the current time.

The Intent Testing History

You can export the training data into a CSV file so that you can find out how the intents were trained.

By examining these logs in a text editor or a spreadsheet program like Microsoft Excel, you can see each user request and bot reply. You can sort through these logs to see where the bot matched the user request with the right intent and where it didn’t.

Export Intent Data

To capture all of the intent testing data in a log, be sure to enable Intent Conversation in Settings > General before you test your intents.

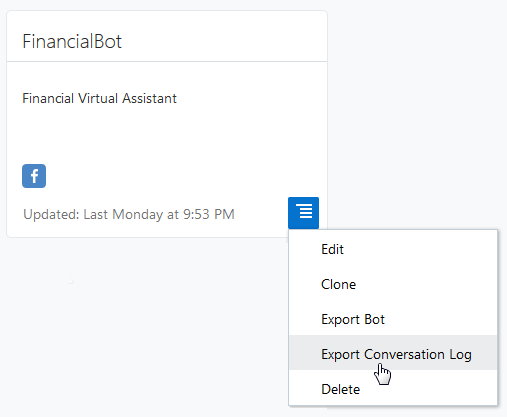

To export data:

- In the bots catalog, open the menu in the tile and then click Export Conversation Log.

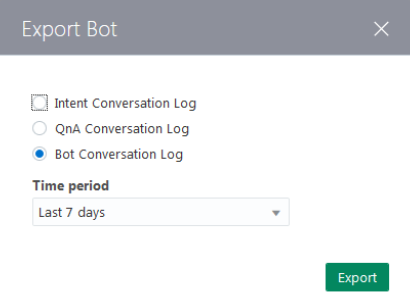

- In the Export Bot dialog, choose the log type (conversation or intent) and a logging period.

- Open the CSV file in a spreadsheet program to review it. You can see whether your model matches intents consistently by filtering the rows by keyword.

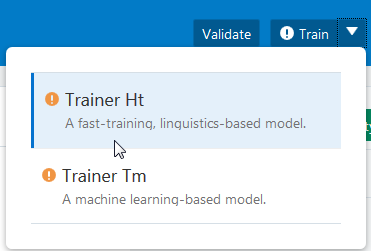

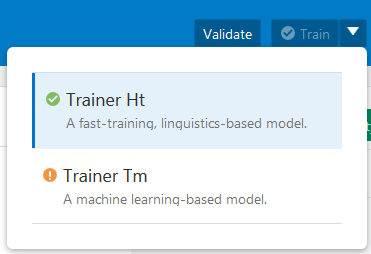

Which Training Model Should I Use?

We provide a duo of models that you can train to mold your bot’s cognition. You can use one or both of these models, each of which uses a different approach to machine learning.

Trainer Ht

Trainer Ht is the default training model. It needs only a small training corpus, so use it as you develop the entities, intents, and the training corpus. When the training corpus has matured to the point where tests reveal highly accurate intent resolution, you’re ready to add a deeper dimension to your bot’s cognition by training Trainer Tm.

You can get a general understanding of how Trainer Ht resolves intents just from the training corpus itself. It forms matching rules from the sample sentences by tagging parts of speech and entities (both custom and built-in) and by detecting words that have the same meaning within the context of the intent. If an intent called SendMoney has both Send $500 to Mom and Pay Cleo $500, for example, Trainer Ht interprets pay as the equivalent of send. After training, Trainer Ht’s tagging reduces these sentences to templates (Send Currency to person, Pay person Currency) that it applies to the user input.
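To make the idea of a template concrete, the following toy sketch replaces entity values with their type names. It is an illustration only and not how Trainer Ht is implemented: the regular expression, the `KNOWN_PEOPLE` set, and `to_template` are all hypothetical stand-ins for real entity recognition.

```python
import re

# Toy stand-ins for entity recognition; a real model tags far more than this.
CURRENCY = re.compile(r"\$\d+(?:\.\d+)?")
KNOWN_PEOPLE = {"Mom", "Cleo"}  # hypothetical person entity values

def to_template(sentence):
    """Replace entity values with their type names, leaving other words as-is."""
    tagged = CURRENCY.sub("Currency", sentence)
    words = ["person" if w in KNOWN_PEOPLE else w for w in tagged.split()]
    return " ".join(words)

templates = [to_template(s) for s in ("Send $500 to Mom", "Pay Cleo $500")]
print(templates)  # ['Send Currency to person', 'Pay person Currency']
```

Once both sentences are reduced this way, the only remaining difference is the verb and the word order, which is where treating pay and send as equivalents comes in.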

Tip:

Because of its quick training, use Trainer Ht to help you define and refine your training corpus. While you can add sentences to an intent whenever the resolution is faulty (or in the worst case, add your entire testing corpus), be sure to aim for a concise training corpus by following the guidelines in Guidelines for Building Your Training Corpus.

Trainer Tm

Because Trainer Tm doesn’t focus as heavily on matching rules as Trainer Ht, it can help your bot interpret user input that falls outside of your training corpus. Trainer Tm differs from Trainer Ht in other ways as well: its intent resolution can be less predictable across training sessions.

Note:

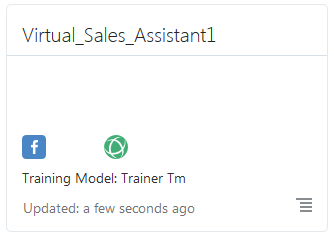

Trainer Ht is the default model, but you can change this by clicking Settings > General and then by choosing another model from the list. The default model displays in the tile in the bot catalog.

Guidelines for Building Your Training Corpus

When you define an intent, you first give it a name that illustrates some user action and then follow up by compiling a set of real-life user statements, or utterances. Collectively, your intents, and the utterances that belong to them, make up a training corpus. The term corpus is just a quick way of saying “all of the intents and sample phrases that I came up with to make this bot smart”. The corpus is the key to your bot’s intelligence. By training a model with your corpus, you essentially turn that model into a reference tool for resolving user input to a single intent. Because your training corpus ultimately plays the key role in deciding which route the bot-human conversation will take, you need to choose your words carefully when building it.

Generally speaking, a large and varied set of sample phrases increases a model’s ability to resolve intents accurately. But building a robust training corpus doesn’t just begin with well-crafted sample phrases; it actually begins with intents that are clearly delineated. Not only should they clearly reflect your use case, but their relationship to their sample sentences should be equally clear. If you’re not sure where a sample sentence belongs, then your intents aren’t distinct from one another.

- Create 12 to 24 sample phrases per intent (if possible). Keep in mind that the more examples you add, the more resilient your bot becomes.

  Important:

  Trainer Tm can’t learn from an intent that has only one utterance.

- Avoid sentence fragments and single words. Instead, use complete sentences (which can be up to 255 characters). If you must use single keyword examples, choose them carefully.

- Vary the vocabulary and sentence structure in your sample phrases by one or two permutations using:

  - slang words (moolah, lucre, dough)

  - common expressions (Am I broke? for an intent called AccountBalance)

  - alternate words (Send cash to savings, Send funds to savings, Send money to savings, Transfer cash to savings)

  - different categories of objects (I want to order a pizza, I want to order some food)

  - alternate spellings (check, cheque)

  - common misspellings (“buisness” for “business”)

  - unusual word order (To checking, $20 send)

- Create parallel sample phrases for opposing intents. For intents like CancelPizza and OrderPizza, define contrasting sentences like I want to order a pizza and I do not want to order a pizza.

- When certain words or phrases signify a specific intent, you can increase the probability for a correct match by bulking up the training data not only with the words and phrases themselves, but with synonyms and variations as well. For example, a training corpus for an OrderPizza intent might include a high concentration of “I want to” phrases, like I want to order a Pizza, I want to place an order, and I want to order some food. Use similar verbiage sparingly for other intents, because it might skew the training if used too freely (say, a CancelPizza intent with sample phrases like I want to cancel this pizza, I want to stop this order, and I want to order something else). When the high occurrence of unique words or phrases within an intent’s training set is unintended, however, you should revise the initial set of sentences or use the same verbiage for other intents.

- Use different concepts to express the same intent, like I am hungry and Make me a pizza.

- Watch the letter casing: use uppercase when your entities extract proper nouns, like Susan and Texas, but use lowercase everywhere else.

- Grow the corpus by adding any mismatched sentence to the correct intent.
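Some of these guidelines can be checked mechanically before you import a corpus. The following is a minimal sketch that flags intents with fewer than 12 sample phrases, phrases longer than 255 characters, and single-word phrases; `lint_corpus` and the constant names are hypothetical, and the checks cover only the countable guidelines above.

```python
from collections import defaultdict

MIN_PHRASES = 12   # lower bound of the 12-to-24 guideline
MAX_LENGTH = 255   # maximum utterance length in characters

def lint_corpus(rows):
    """Return a list of warnings for (utterance, intent) pairs."""
    by_intent = defaultdict(list)
    for query, intent in rows:
        by_intent[intent].append(query)

    warnings = []
    for intent, queries in sorted(by_intent.items()):
        if len(queries) < MIN_PHRASES:
            warnings.append(f"{intent}: only {len(queries)} sample phrase(s)")
        for q in queries:
            if len(q) > MAX_LENGTH:
                warnings.append(f"{intent}: phrase longer than {MAX_LENGTH} characters")
            if len(q.split()) == 1:
                warnings.append(f"{intent}: single-word phrase {q!r}")
    return warnings

rows = [("I want to order a pizza", "OrderPizza"), ("pizza", "OrderPizza")]
warnings = lint_corpus(rows)
for warning in warnings:
    print(warning)
```

A check like this cannot judge vocabulary variety or whether opposing intents have parallel phrases; those still need a human review.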

Tip:

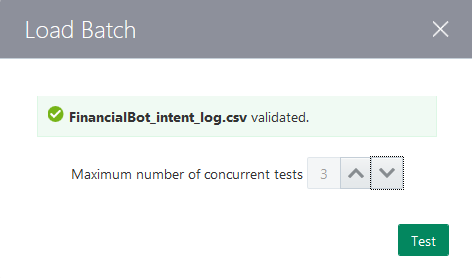

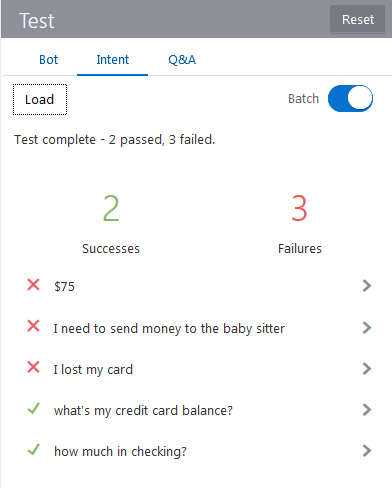

Keep a test corpus as a CSV file to batch test intent resolution by clicking More and then Export Intents. Because adding a new intent example can cause regressions, you might end up adding several test phrases to stabilize the intent resolution behavior.

Reference Intents in the Dialog Flow

Name each intent in the actions property, as shown in the PizzaBot’s intent state. See System.Intent.

    intent:
      component: "System.Intent"
      properties:
        variable: "iResult"
        confidenceThreshold: 0.4
      transitions:
        actions:
          OrderPizza: "resolvesize"
          CancelPizza: "cancelorder"
          unresolvedIntent: "unresolved"
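The routing that this state describes can be sketched in a few lines. This is a hypothetical stand-in, not the component's own code: it assumes that the top-ranked intent is used only when its score meets the confidence threshold and that everything else falls through to unresolvedIntent. Whether the real comparison is inclusive is also an assumption.

```python
# Hypothetical stand-in for the transition logic; not the component's own code.
ACTIONS = {
    "OrderPizza": "resolvesize",
    "CancelPizza": "cancelorder",
    "unresolvedIntent": "unresolved",
}
CONFIDENCE_THRESHOLD = 0.4

def next_state(ranked_intents):
    """Pick the next state from (intent, score) pairs."""
    if ranked_intents:
        intent, score = max(ranked_intents, key=lambda pair: pair[1])
        # Assumption: a score equal to the threshold counts as resolved.
        if score >= CONFIDENCE_THRESHOLD and intent in ACTIONS:
            return ACTIONS[intent]
    return ACTIONS["unresolvedIntent"]

print(next_state([("OrderPizza", 0.82), ("CancelPizza", 0.11)]))  # resolvesize
print(next_state([("OrderPizza", 0.31), ("CancelPizza", 0.29)]))  # unresolved
```

Raising the threshold sends more borderline input to the unresolved state; lowering it makes the bot act on weaker matches.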


The Train button activates whenever you add an intent or when you update an intent by adding, changing, or deleting its utterances. To bring the training up to date, choose a training model and then click Train.

… and then send the test phrase again.

… to see how the test results compare to the batch data.