1.1 Getting Past the 4 Gigabyte Barrier

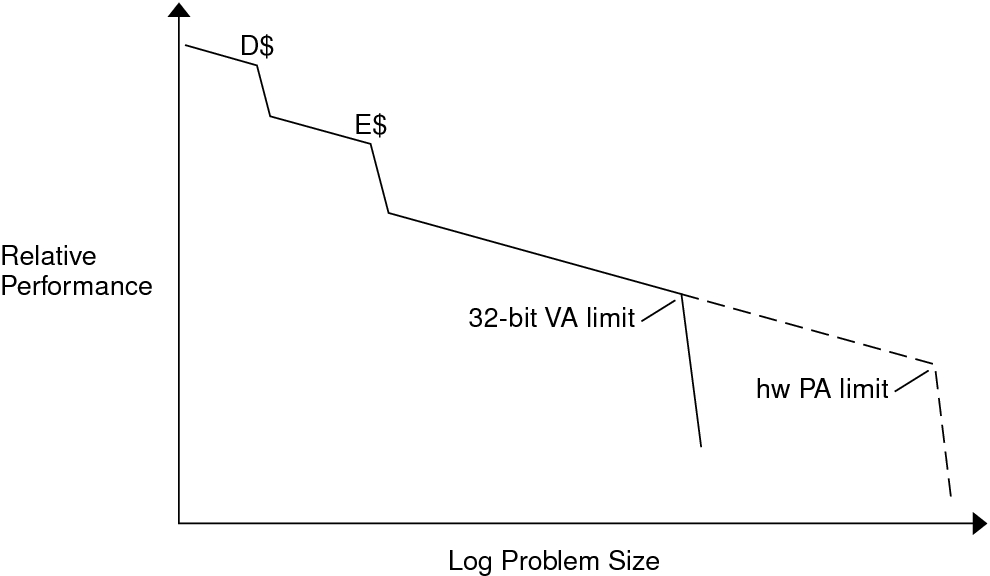

The diagram in Figure 1, Typical Performance and Problem Size Curve, shows how an application's performance drops significantly as the problem size increases, even on a machine with a large amount of physical memory. For very small problem sizes, the entire program can fit in the data cache (D$) or the external cache (E$). Eventually, however, the program's data area becomes large enough that the program fills the entire 4 GB virtual address space of a 32-bit application.

Figure 1 Typical Performance and Problem Size Curve

Beyond the 32-bit virtual address limit, application programmers can still handle large problem sizes. Usually, applications that exceed the 32-bit virtual address limit split the application data set between primary memory and secondary memory, for example, a disk. Unfortunately, transferring data to and from a disk drive takes orders of magnitude longer than memory-to-memory transfers.
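The split between primary and secondary memory described above can be sketched as follows. This is a minimal illustration, not a method taken from this guide: the function names `chunked_sum` and `write_demo_file`, the file layout (raw 64-bit floats), and the chunk size are all assumptions chosen for the example. Only one chunk of the data set is resident in memory at a time; the rest stays on disk.

```python
import array
import os
import tempfile


def chunked_sum(path, chunk_items=1024):
    """Sum 64-bit floats stored in a binary file.

    Hypothetical helper: at most `chunk_items` values are held in
    memory at once; everything else stays on disk.
    """
    item_size = array.array("d").itemsize
    total = 0.0
    with open(path, "rb") as f:
        while True:
            raw = f.read(chunk_items * item_size)
            if not raw:
                break  # end of file reached
            chunk = array.array("d")
            chunk.frombytes(raw)  # decode this chunk only
            total += sum(chunk)
    return total


def write_demo_file(n):
    """Write the doubles 0.0 .. n-1 to a temporary file; return its path."""
    fd, path = tempfile.mkstemp()
    with os.fdopen(fd, "wb") as f:
        array.array("d", (float(i) for i in range(n))).tofile(f)
    return path


if __name__ == "__main__":
    demo_path = write_demo_file(10_000)
    try:
        print(chunked_sum(demo_path, chunk_items=256))
    finally:
        os.remove(demo_path)
```

Every pass through the loop pays for a disk read, which is where the cost noted above comes from. An application that can address the whole data set in primary memory avoids this staging step entirely.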

Today, many servers can handle terabytes of physical memory. A single 32-bit application cannot directly address more than 4 GB at a time. However, a 64-bit application can use the 64-bit virtual address space to directly address up to 18 exabytes (1 exabyte is approximately 10^18 bytes). Thus, larger problems can be handled directly in primary memory. If the application is multithreaded and scalable, more processors can be added to the system to speed up the application even further. Such applications become limited only by the amount of physical memory in the machine.
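The address-space figures above follow directly from the pointer width: a pointer of n bits can name 2^n distinct bytes, so 32 bits give 4 GB and 64 bits give roughly 1.8 x 10^19 bytes, or about 18 exabytes. The short sketch below reproduces that arithmetic. The function names are hypothetical, and `pointer_bits_of_this_process` reports the pointer width of whatever Python interpreter runs it, which is used here only as a stand-in for checking whether a process is 32-bit or 64-bit.

```python
import struct


def address_space_bytes(pointer_bits):
    """Number of bytes directly addressable with a pointer of this width."""
    return 2 ** pointer_bits


def pointer_bits_of_this_process():
    """Width in bits of a native pointer ("P") in the running interpreter."""
    return struct.calcsize("P") * 8


if __name__ == "__main__":
    for bits in (32, 64):
        size = address_space_bytes(bits)
        print(f"{bits}-bit: {size} bytes, {size / 10**18:.3g} exabytes")
    print(f"this process uses {pointer_bits_of_this_process()}-bit pointers")
```

Note that these are limits on virtual addresses. How much of that space a real system lets a process use depends on the hardware and operating system.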

For a wide range of applications, the ability to handle larger problems directly in primary memory is the major performance benefit of 64-bit systems. Some of the advantages are as follows:

- A greater proportion of a database can live in primary memory.
- Larger CAD/CAE models and simulations can fit in primary memory.
- Larger scientific computing problems can fit in primary memory.
- Web caches can hold more in memory, reducing latency.