Sun Ethernet Fabric Operating System SLB Administration Guide

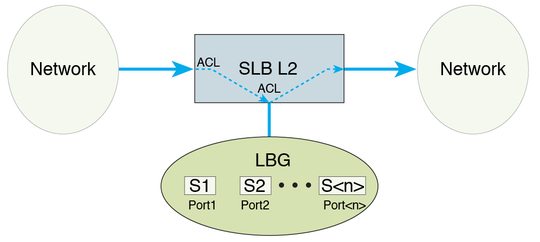

This figure shows the SLB-L2 topology used in this document.

In SLB-L2, all traffic operates within the same subnet, so no routing takes place in the load distribution data path. Traffic that needs to be load balanced is first selected by an ACL at the ingress port. If the ACL permits the traffic, the ACL redirects it to an LBG. Traffic leaving the switch through the ports in an LBG can be tagged with a unique VLAN ID before it reaches the servers connected to those ports. After the servers process the data, all traffic that enters the switch tagged with that VLAN ID is redirected to an egress port of the switch.
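The data path described above can be pictured with a small toy model. This is only an illustrative sketch, not the switch implementation: the port numbers, the VLAN ID, the ACL rule, and the names `Frame`, `acl_permits`, `forward_to_server`, and `return_from_server` are all hypothetical, and the member selection uses a CRC32 hash purely as a stand-in.

```python
import zlib
from dataclasses import dataclass, replace
from typing import Optional, Tuple


@dataclass(frozen=True)
class Frame:
    src_ip: str
    dst_ip: str
    vlan_id: Optional[int] = None  # None means the frame is untagged


# Hypothetical values chosen only for this illustration.
EGRESS_PORT = 2
LBG_PORTS = (10, 11, 12)   # switch ports that belong to the LBG
LBG_VLAN_ID = 100          # unique VLAN ID applied toward the servers


def acl_permits(frame: Frame) -> bool:
    """Toy ACL: permit only traffic destined to one example prefix."""
    return frame.dst_ip.startswith("192.0.2.")


def forward_to_server(frame: Frame) -> Optional[Tuple[int, Frame]]:
    """Model a frame arriving at the ingress port.

    Returns (LBG port, tagged frame) when the ACL permits the frame,
    or None when the frame is not selected for load balancing.
    """
    if not acl_permits(frame):
        return None
    key = f"{frame.src_ip}>{frame.dst_ip}".encode()
    port = LBG_PORTS[zlib.crc32(key) % len(LBG_PORTS)]  # stand-in hash
    return port, replace(frame, vlan_id=LBG_VLAN_ID)


def return_from_server(frame: Frame) -> Optional[Tuple[int, Frame]]:
    """Model a frame coming back from a server.

    Frames carrying the LBG VLAN ID are redirected to the egress port.
    """
    if frame.vlan_id != LBG_VLAN_ID:
        return None
    return EGRESS_PORT, frame
```

In this model, a permitted frame leaves through one of the LBG ports with the VLAN tag applied, and a frame that returns with the same tag is sent to the egress port. No routing decision appears anywhere in the path, which matches the same-subnet behavior described above.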

You can configure the ingress and egress ports as the same port or as different ports. This arrangement is referred to as a bump-in-the-wire configuration, where the servers are the "bumps" in the wire for packet processing. SLB-L2 divides ingress traffic into multiple flows and hashes them across multiple server members. This mechanism enables multiple data flows to be processed simultaneously, increasing the overall throughput of the wire.
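The idea of hashing flows across server members can be sketched as follows. This is a minimal illustration under stated assumptions: the fields used to identify a flow (a five-tuple here), the CRC32 hash, and the names `Flow`, `pick_member`, and the `server-*` labels are hypothetical and do not describe the hash the switch actually uses.

```python
import zlib
from collections import Counter
from typing import NamedTuple, Sequence


class Flow(NamedTuple):
    # Assumed flow key; the switch may use different fields.
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    protocol: int


def pick_member(flow: Flow, members: Sequence[str]) -> str:
    """Map a flow to one member with a deterministic hash.

    Every packet of the same flow produces the same key, so the whole
    flow is processed by a single server member.
    """
    key = "|".join(str(field) for field in flow).encode()
    return members[zlib.crc32(key) % len(members)]


members = ["server-a", "server-b", "server-c"]  # hypothetical member names

# 300 example flows that differ only in source port.
flows = [Flow("198.51.100.7", "192.0.2.10", 40000 + i, 80, 6) for i in range(300)]

# Count how many flows land on each member.
load = Counter(pick_member(flow, members) for flow in flows)
print(load)
```

Because the mapping depends only on the flow key, packets from one flow stay on one member while different flows can be processed by different members at the same time.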