12 Systems Menu

Note:

Screens indicated with an asterisk (*) are available from the Systems dropdown menu but not from the Systems area of the home screen.

- Event Logging

- Vendor User Profiles: Used for the Supplier Direct Fulfillment module. Available if Use Vendor Portal is selected at the Tenant screen.

- Vendor User Profile: Available if Use Vendor Portal is selected at the Tenant screen.

- Browse Vendor User Profile: Available if Use Vendor Portal is selected at the Tenant screen.

- Proximity Uploads: Available if Use Routing Engine is selected at the Tenant screen.

- *Tenant: Advances to either the Tenant (retailer information) screen or the Tenant-Admin screen, depending on whether you are an admin user.

- *File Storage History

- *About Order Orchestration

Auto Cancel Unclaimed Pickup Orders History

Purpose: Use the Auto Cancel Unclaimed Pickup Orders History screen to review the auto cancel unclaimed pickup orders jobs that have taken place or the one that is currently running, if any.

Used for the Routing Engine module.

For more information: See Auto Cancel Unclaimed Pickup Orders for an overview on the automatic cancellation process and background information.

How to display this screen: Click the history icon next to the Auto Cancel Unclaimed Pickup Orders entry for a system at the View Active Schedules screen.

Note:

- Available if Use Routing Engine is selected at the Tenant screen. Only users with View Active Schedules authority can display this screen. See Roles for more information.

- Available only if the Auto Cancel Unclaimed Pickup Orders schedule is currently enabled, or if there are any auto cancel unclaimed pickup order records that have not been purged.

- History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.
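As a rough illustration of the retention rule in the note above, the following Python sketch checks whether a job history record would still fall inside the retention window. The function name `is_retained`, the use of the job's end date as the reference point, and the inclusive boundary are assumptions made for illustration; this guide does not describe how Order Orchestration performs the purge.

```python
from datetime import datetime, timedelta


def is_retained(end_date: datetime, retention_days: int, now: datetime) -> bool:
    """Return True while a job record is still inside the retention window.

    Assumption: the window is measured from the job's end date and the
    boundary day is still retained. The real purge logic may differ.
    """
    return now - end_date <= timedelta(days=retention_days)


# Example: a job that ended on 11/28/2021, checked on 12/10/2021.
ended = datetime(2021, 11, 28, 16, 29)
today = datetime(2021, 12, 10, 9, 0)
print(is_retained(ended, retention_days=30, now=today))
print(is_retained(ended, retention_days=7, now=today))
```

With a Job History retention setting of 30 days the record is still eligible for display; with a setting of 7 days it is past the window.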

Options at this screen

| Option | Procedure |
|---|---|
| Search for an auto-cancel process | Use the Job Number, Start Date, or Status fields to restrict the displayed jobs to those that match your entries and click Search. |
| Understand general errors | If an error occurred for a process itself, it is displayed in the Error field. |

Fields on this screen

| Field | Description |
|---|---|
| Search fields | |
| Job Number | A unique ID number assigned by Order Orchestration to identify a job. Note: A job is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the job started. |
| Status | Optionally, select a status from the drop-down list and click Search to display jobs that are currently in that status. Possible statuses are: |
| Results fields: | |
| Job Number | A unique ID number assigned by Order Orchestration to identify a job. Note: A job is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the job started. |
| End Date | The date and time when the job ended. |
| Duration | The amount of time it took for the job to run. HH:MM:SS format, but displayed in MM:SS format if the job took less than an hour. |
| Submitted By | Indicates how the job was submitted. This could be the ID of the user who submitted or scheduled the process, or the client ID used to authenticate the run job API request message. SYSTEM is displayed here if the job ran successfully through a schedule; however, if a scheduled job was rejected, the ID of the user who scheduled the job is displayed. |
| Status | Indicates the result of the job: |
| Error | Error message, if any. For example, a message is written when the job status is reset: Running job status was reset by user SYSTEM on 11/28/2021 04:29 PM. Up to 255 positions. See Auto Cancel Unclaimed Pickup Orders for background and troubleshooting information. |
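The Duration rule described above (HH:MM:SS, shortened to MM:SS when the job took less than an hour) can be sketched as follows. The helper name `format_duration` and the zero-padding of each part are assumptions for illustration, not a description of how the screen is implemented.

```python
def format_duration(total_seconds: int) -> str:
    """Format a run time the way the Duration field is described.

    Assumption: every part is zero-padded to two digits.
    """
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    if hours:
        # One hour or more: show the full HH:MM:SS form.
        return f"{hours:02d}:{minutes:02d}:{seconds:02d}"
    # Under an hour: drop the hours part.
    return f"{minutes:02d}:{seconds:02d}"


print(format_duration(75))    # 01:15
print(format_duration(3675))  # 01:01:15
```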

Completed Order Private Data Purge History

Purpose: Use the Completed Order Private Data Purge History screen to review the completed order data purge jobs that have taken place or the one that is currently running, if any.

For more information: See Completed Order Private Data Purge for an overview of the completed order private data purge process and background information.

How to display this screen: Click the history icon next to the Completed Order Private Data Purge entry at the View Active Schedules screen.

Note:

- Only users with View Active Schedules authority can display this screen. See Roles for more information.

- History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.

Options at this screen

| Option | Procedure |
|---|---|
| Search for a data purge process | Use the Job Number, Start Date, or Status fields to restrict the displayed jobs to those that match your entries and click Search. |
| Understand general errors | If an error occurred for a process itself, it is displayed in the Error field. |

Fields on this screen

| Field | Description |
|---|---|
| Search fields | |
| Job Number | A unique ID number assigned by Order Orchestration to identify a job. Note: A job is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the job started. |
| Status | Optionally, select a status from the drop-down list and click Search to display jobs that are currently in that status. Possible statuses are: |
| Results fields: | |
| Job Number | A unique ID number assigned by Order Orchestration to identify a job. Note: A job is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the job started. |
| End Date | The date and time when the job ended. |
| Duration | The amount of time it took for the job to run. HH:MM:SS format, but displayed in MM:SS format if the job took less than an hour. |
| Submitted By | Indicates how the job was submitted. Set to the user ID of the user who submitted the job, set to SYSTEM if it ran as scheduled, or set to the client ID used to authenticate the run job API request message. |
| Status | Indicates the result of the job: |
| Error | Error message, if any. For example, a message is written when the job status is reset: Running job status was reset by user SYSTEM on 11/28/2021 04:29 PM. Up to 255 positions. See Email Notifications for background and troubleshooting information. |

Daily Clean Up Job History

Purpose: Use the Daily Clean Up Job History screen to review the daily cleanup jobs that have taken place or the one that is currently running, if any.

For more information: See Daily Cleanup for an overview of the daily cleanup process and background information.

How to display this screen: Click the history icon next to the Daily Clean Up entry at the View Active Schedules screen.

Note:

- Only users with View Active Schedules authority can display this screen. See Roles for more information.

- History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.

Options at this screen

| Option | Procedure |
|---|---|
| Search for a daily cleanup process | Use the Job Number, Start Date, or Status fields to restrict the displayed jobs to those that match your entries and click Search. |
| Understand general errors | If an error occurred for a process itself, it is displayed in the Error field. |

Fields on this screen

| Field | Description |
|---|---|
| Search fields | |
| Job Number | A unique ID number assigned by Order Orchestration to identify a job. Note: A job is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the job started. |
| Status | Optionally, select a status from the drop-down list and click Search to display jobs that are currently in that status. Possible statuses are: |
| Results fields: | |
| Job Number | A unique ID number assigned by Order Orchestration to identify a job. Note: A job is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the job started. |
| End Date | The date and time when the job ended. |
| Duration | The amount of time it took for the job to run. HH:MM:SS format, but displayed in MM:SS format if the job took less than an hour. |
| Submitted By | Indicates how the job was submitted. Set to the user ID of the user who submitted the job, set to SYSTEM if it ran as scheduled, or set to the client ID used to authenticate the run job API request message. |
| Status | Indicates the result of the job: |
| Error | Error message, if any. For example, a message is written when the job status is reset: Running job status was reset by user SYSTEM on 11/28/2021 04:29 PM. Up to 255 positions. See Email Notifications for background and troubleshooting information. |

Email Notifications Job History

Purpose: Use the Email Notifications Job History screen to review the email notifications jobs that have taken place or the one that is currently running, if any.

Used for the Routing Engine module.

For more information: See Email Notifications for an overview on the email notifications process and background information.

How to display this screen: Click the history icon next to the Email Notifications entry at the View Active Schedules screen.

Note:

- Only users with View Active Schedules authority can display this screen. See Roles for more information.

- History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.

Options at this screen

| Option | Procedure |
|---|---|
| Search for an email notifications process | Use the Job Number, Start Date, or Status fields to restrict the displayed jobs to those that match your entries and click Search. |
| Understand general errors | If an error occurred for a process itself, it is displayed in the Error field. |

Fields on this screen

| Field | Description |
|---|---|
| Search fields | |
| Job Number | A unique ID number assigned by Order Orchestration to identify a job. Note: A job is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the job started. |
| Status | Optionally, select a status from the drop-down list and click Search to display jobs that are currently in that status. Possible statuses are: |
| Results fields: | |
| Job Number | A unique ID number assigned by Order Orchestration to identify a job. Note: A job is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the job started. |
| End Date | The date and time when the job ended. |
| Duration | The amount of time it took for the job to run. HH:MM:SS format, but displayed in MM:SS format if the job took less than an hour. |
| Submitted By | Indicates how the job was submitted. Set to SYSTEM if the job ran as scheduled, or to the client ID used to authenticate the run job API request message. |
| Status | Indicates the result of the job: |
| Error | Error message, if any. For example, a message is written when the job status is reset: Running job status was reset by user SYSTEM on 11/28/2021 04:29 PM. Up to 255 positions. See Email Notifications for background and troubleshooting information. |

Generate Pickup Reminder Email History

Purpose: Use the Generate Pickup Reminder Email History screen to review summary information on the pickup reminder email generation for an organization.

Used for the Routing Engine module.

How to display this screen: Click the history icon next to the Generate Pickup Ready Reminder Emails job for an organization at the View Active Schedules screen.

Note:

- Only users with View Active Schedules authority can display this screen. See Roles for more information.

- Available only if the Generate Pickup Ready Reminder Emails job is currently enabled, or if there are any email generation history records that have not been purged.

- If the Generate Pickup Reminder Email History screen was already open in another tab when you clicked the history icon, you advance to this screen with the pickup reminder email history of the previously-selected organization displayed.

- History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.

For more information: See the Store Connect Overview for background.

Options at this screen

| Option | Procedure |
|---|---|
| Search for a reminder email history record | Use the Job Number, Start Date, or Status fields to restrict the displayed jobs to those that match your entries and click Search. |
| Understand general errors | If an error occurred for the process itself, it is displayed in the Error field. |

Fields at this screen

| Field | Description |
|---|---|
| Summary fields: | |
| Organization | The code identifying the organization that generated the extract process, and the description of the organization. |
| Search fields: | |
| Job Number | A unique ID number assigned by Order Orchestration to identify a job. Optionally, enter a number and click Search to display this process only. |
| Start Date | Optionally, enter a date to display jobs that started on that date. |
| Status | Optionally, select a status of: |
| Results fields: | Note: History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen. |
| Job Number | A unique number assigned by Order Orchestration to identify a job. |
| Start Date | The date and time when the process started. |
| End Date | The date and time when the process ended. |
| Duration | The amount of time it took for the job to run. HH:MM:SS format, but displayed in MM:SS format if the job took less than an hour. |
| Submitted By | Indicates how the job was submitted. This could be the ID of the user who submitted or scheduled the process, or the client ID used to authenticate the run job API request message. SYSTEM is displayed here if the job ran successfully through a schedule; however, if a scheduled job was rejected, the ID of the user who scheduled the job is displayed. |
| Status | Indicates whether any errors occurred for the process: |
| Error | The error that occurred, if any. |
| Emails Generated | The total number of pickup reminder emails that were generated. |
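The search behavior described for the Job Number, Start Date, and Status fields can be pictured with the sketch below. Everything in it is hypothetical: the `JobRecord` class, the `search_jobs` function, and the placeholder status values are invented for illustration, and combining the criteria with a logical AND is an assumption rather than documented behavior.

```python
from dataclasses import dataclass
from datetime import date, datetime
from typing import Iterable, List, Optional


@dataclass
class JobRecord:
    """Hypothetical stand-in for one row in the results area."""
    job_number: int
    start: datetime
    status: str  # placeholder values only; real statuses are not listed here


def search_jobs(
    jobs: Iterable[JobRecord],
    job_number: Optional[int] = None,
    start_date: Optional[date] = None,
    status: Optional[str] = None,
) -> List[JobRecord]:
    """Keep only the jobs that match every criterion that was supplied."""
    matches = []
    for job in jobs:
        if job_number is not None and job.job_number != job_number:
            continue
        # A Start Date entry matches jobs that started on that calendar date.
        if start_date is not None and job.start.date() != start_date:
            continue
        if status is not None and job.status != status:
            continue
        matches.append(job)
    return matches


history = [
    JobRecord(101, datetime(2021, 11, 28, 16, 29), "STATUS_A"),
    JobRecord(102, datetime(2021, 11, 29, 2, 0), "STATUS_B"),
]
print([j.job_number for j in search_jobs(history, start_date=date(2021, 11, 28))])
```

Leaving all three criteria empty returns every record, which mirrors clicking Search without entering anything.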

Fulfilled Inventory Export History

Purpose: Use the Fulfilled Inventory Export History screen to review the fulfilled inventory exports that have taken place or are currently running.

Used for the Routing Engine module.

For more information: See Fulfilled Inventory Export for an overview on the fulfilled inventory export process and background information.

How to display this screen: Click the history icon next to the Fulfilled Inventory Export entry for a system at the View Active Schedules screen.

Note:

- Available if Use Routing Engine is selected at the Tenant screen. Only users with View Active Schedules authority can display this screen. See Roles for more information.

- Available only if the Fulfilled Inventory Export is currently enabled, or if there are any fulfilled inventory export records that have not been purged.

- If the Fulfilled Inventory Export History screen was already open in another tab when you clicked the history icon, you advance to this screen with the fulfilled inventory export history of the previously-selected organization and system displayed.

- History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.

Options at this screen

| Option | Procedure |
|---|---|
| Search for an export process | Use the Job Number, Start Date, or Status fields to restrict the displayed exports to those that match your entries and click Search. |
| Understand general errors | If an error occurred for an export process itself, it is displayed in the Error field. |

Fields on this screen

| Field | Description |
|---|---|
| Organization | The code identifying the organization associated with the export you selected at the View Active Schedules screen is displayed. The description of the organization is to the right. |
| System | The code identifying the system associated with the export you selected at the View Active Schedules screen is displayed. The description of the system is to the right. |
| Search fields | |
| Job Number | A unique ID number assigned by Order Orchestration to identify an export process. Note: An export is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the export process started. |
| Status | Optionally, select an export status from the drop-down list and click Search to display fulfillment exports that are currently in that status. Possible statuses are: |
| Results fields: | |
| Job Number | A unique ID number assigned by Order Orchestration to identify an export process. Note: An export is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the export process started. |
| End Date | The date and time when the export process ended. |
| Duration | The amount of time it took for the export to run. HH:MM:SS format, but displayed in MM:SS format if the export took less than an hour. |
| Submitted By | Indicates how the export was submitted. Set to the user ID of the user who submitted the job, or to the client ID used to authenticate the run job API request message. SYSTEM is displayed here if the job ran successfully through a schedule; however, if a scheduled job was rejected, the ID of the user who scheduled the job is displayed. |
| Status | Indicates the result of the fulfillment export process: |
| Error | Error message, if any. For example, a message is written when the job status is reset: Running job status was reset by user SYSTEM on 11/28/2018 04:29 PM. Up to 255 positions. See Fulfilled Inventory Export for background and troubleshooting information. |
| Inventory Records | The total number of fulfilled inventory records in the export. |
| Inventory Errors | The total number of fulfilled inventory records that failed to export. See Fulfilled Inventory Export for background and troubleshooting information. |
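The rules for the Submitted By field can be summarized in a small decision function. This is a sketch only: the function name `submitted_by`, its parameters, and the `"user"`, `"schedule"`, and `"api"` source labels are hypothetical and are not part of Order Orchestration.

```python
from typing import Optional


def submitted_by(
    source: str,
    user_id: Optional[str] = None,
    client_id: Optional[str] = None,
    rejected: bool = False,
) -> Optional[str]:
    """Return the value the Submitted By field would show.

    source is a hypothetical label: "user", "schedule", or "api".
    """
    if source == "api":
        # Run job API request: the authenticating client ID is shown.
        return client_id
    if source == "schedule":
        # Scheduled run: SYSTEM on success, the scheduling user if rejected.
        return user_id if rejected else "SYSTEM"
    # Submitted on demand by a user.
    return user_id


print(submitted_by("schedule", user_id="jsmith"))                 # SYSTEM
print(submitted_by("schedule", user_id="jsmith", rejected=True))  # jsmith
```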

Incremental Imports History

Purpose: Use the Incremental Imports History screen to review the incremental inventory imports that have taken place or are currently running.

Used for the Routing Engine module.

For more information: See Incremental Inventory Import for an overview on the incremental inventory import process and background information.

How to display this screen: Click the history icon next to an Incremental Inventory Import entry for a system at the View Active Schedules screen.

Note:

- Available if Use Routing Engine is selected at the Tenant screen. Only users with View Active Schedules authority can display this screen. See Roles for more information.

- Available only if the Incremental Inventory Import is currently enabled, or if there are any incremental inventory import records that have not been purged.

- If the Incremental Imports History screen was already open in another tab when you clicked the history icon, you advance to this screen with the incremental imports history of the previously-selected organization and system displayed.

- History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.

Options at this screen

| Option | Procedure |
|---|---|
| Search for an import process | Use the Job Number, Start Date, or Status fields to restrict the displayed imports to those that match your entries and click Search. |
| Understand general errors | If an error occurred for an import process itself, it is displayed in the Error field. |

Fields on this screen

| Field | Description |
|---|---|
| Organization | The code identifying the organization associated with the import you selected at the View Active Schedules screen is displayed. The description of the organization is to the right. |
| System | The code identifying the system associated with the import you selected at the View Active Schedules screen is displayed. The description of the system is to the right. |
| Search fields | |
| Job Number | A unique ID number assigned by Order Orchestration to identify an import process. Note: An import is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the import process started. |
| Status | Optionally, select an import status from the drop-down list and click Search to display incremental imports that are currently in that status. Possible statuses are: |
| Results fields: | |
| Job Number | A unique ID number assigned by Order Orchestration to identify an import process. Note: An import is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the import process started. |
| End Date | The date and time when the import process ended. |
| Duration | The amount of time it took for the incremental import to run. HH:MM:SS format, but displayed in MM:SS format if the import took less than an hour. |
| Submitted By | Indicates how the import was submitted. This could be the ID of the user who submitted or scheduled the process, or the client ID used to authenticate the run job API request message. SYSTEM is displayed here if the job ran successfully through a schedule; however, if a scheduled job was rejected, the ID of the user who scheduled the job is displayed. |
| Status | Indicates the result of the incremental import process: |
| Error | Error message, if any. For example, a message is written when the job status is reset: Running job status was reset by user SYSTEM on 11/28/2021 04:29 PM. Up to 255 positions. See Incremental Inventory Import for background and troubleshooting information. |
| Inventory Records | The total number of inventory records in the import. |
| Inventory Errors | The total number of inventory records that failed to import. See Incremental Inventory Import for background and troubleshooting information. |

Identity Cloud User Synchronization History

Purpose: Use the Identity Cloud User Synchronization History screen to review the identity cloud user synchronization jobs that have taken place or are currently running. The screen displays up to 50 records.

For more information: See Identity Cloud User Synchronization for an overview on the identity cloud user synchronization job and background information.

How to display this screen: Click the history icon next to an Identity Cloud User Synchronization job at the View Active Schedules screen.

Note:

- Only users with View Active Schedules authority can display this screen. See Roles for more information.

- Available only if there are any identity cloud service synchronization records that have not been purged.

- History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.

Options at this screen

| Option | Procedure |
|---|---|
| Search for a synchronization job | Use the Job Number, Start Date, or Status fields to restrict the displayed synchronization jobs to those that match your entries and click Search. |
| Understand general errors | If an error occurred for a synchronization job itself, it is displayed in the Error field. |

Fields on this screen

| Field | Description |
|---|---|
| Search fields | |
| Job Number | A unique ID number assigned by Order Orchestration to identify a synchronization job. Note: A job is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the synchronization job started. |
| Status | Optionally, select a job status from the drop-down list and click Search to display jobs that are currently in that status. Possible statuses are: |
| Results fields: | |
| Job Number | A unique ID number assigned by Order Orchestration to identify a synchronization job. Note: A job is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the job started. |
| End Date | The date and time when the job ended. |
| Duration | The amount of time it took for the job to run. HH:MM:SS format, but displayed in MM:SS format if the job took less than an hour. |
| Submitted By | Indicates how the job was submitted. This could be the ID of the user who submitted or scheduled the job, or the client ID used to authenticate the run job API request message. |
| Status | Indicates the result of the synchronization job: |
| Error | Error message, if any. For example, a message is written when the job status is reset: Running job status was reset by user SYSTEM on 11/28/2021 04:29 PM. Up to 255 positions. See Identity Cloud User Synchronization for background and troubleshooting information. |
| Inventory Records | The total number of records in the synchronization. |
| Inventory Errors | The total number of records that failed to synchronize. See Identity Cloud User Synchronization for background and troubleshooting information. |

Inventory Quantity Export History

Purpose: Use the Inventory Quantity Export History screen to review the inventory quantity exports that have taken place or are currently running.

Used for the Routing Engine module.

For more information: See Fulfilled Inventory Quantity Export for an overview on the inventory quantity export process and background information.

How to display this screen: Click the history icon next to the Inventory Quantity Export entry for a system at the View Active Schedules screen.

Note:

- Available if Use Routing Engine is selected at the Tenant screen. Only users with View Active Schedules authority can display this screen. See Roles for more information.

- Available only if the Fulfilled Inventory Quantity Export is currently enabled, or if there are any inventory quantity export records that have not been purged.

- If the Inventory Quantity Export History screen was already open in another tab when you clicked the history icon, you advance to this screen with the inventory quantity export history of the previously-selected organization and system displayed.

- History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.

Options at this screen

| Option | Procedure |
|---|---|
| Search for an export process | Use the Job Number, Start Date, or Status fields to restrict the displayed exports to those that match your entries and click Search. |
| Understand general errors | If an error occurred for an export process itself, it is displayed in the Error field. |

Fields on this screen

| Field | Description |
|---|---|
| Organization | The code identifying the organization associated with the export you selected at the View Active Schedules screen is displayed. The description of the organization is to the right. |
| System | The code identifying the system associated with the export you selected at the View Active Schedules screen is displayed. The description of the system is to the right. |
| Search fields | |
| Job Number | A unique ID number assigned by Order Orchestration to identify an export process. Note: An export is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the export process started. |
| Status | Optionally, select an export status from the drop-down list and click Search to display inventory quantity exports that are currently in that status. Possible statuses are: |
| Results fields: | |
| Job Number | A unique ID number assigned by Order Orchestration to identify an export process. Note: An export is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |
| Start Date | The date and time when the export process started. |
| End Date | The date and time when the export process ended. |
| Duration | The amount of time it took for the export to run. HH:MM:SS format, but displayed in MM:SS format if the export took less than an hour. |
| Submitted By | Indicates how the export was submitted. This could be the ID of the user who submitted or scheduled the process, or the client ID used to authenticate the run job API request message. SYSTEM is displayed here if the job ran successfully through a schedule; however, if a scheduled job was rejected, the ID of the user who scheduled the job is displayed. |
| Status | Indicates the result of the inventory quantity export process: |
| Error | Error message, if any. For example, a message is written when the job status is reset: Running job status was reset by user SYSTEM on 11/28/2021 04:29 PM. Up to 255 positions. See Fulfilled Inventory Export for background and troubleshooting information. |
| Inventory Records | The total number of inventory quantity records in the export. |
| Inventory Errors | The total number of inventory quantity records that failed to export. See Fulfilled Inventory Export for background and troubleshooting information. |

Product Imports History

Purpose: Use the Product Imports History screen to review summary information on the product, product location, location, and UPC imports that have taken place for a system. See Importing Items/Products, Inventory, Barcodes, Images, and Locations into the Database for an overview of the import process.

Used for the Routing Engine module.

How to display this screen: Click the history icon next to a Product Import entry for a system at the View Active Schedules screen.

Note:

- Available if Use Routing Engine is selected at the Tenant screen. Only users with View Active Schedules authority can display this screen. See Roles for more information.

- Available only if the Product Import is currently enabled, or if there are any product import records that have not been purged.

- If the Product Imports History screen was already open in another tab when you clicked the history icon, you advance to this screen with the product imports history of the previously-selected organization and system displayed.

- History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.

Reviewing import errors:

- Reports: Use the Location Import Errors Report, Product Import Errors Report, and Product Barcode Import Errors Report to review any errors that occurred related to location, product, or barcode imports.

- Error files: Errors related to product location imports are tracked in an import error flat file that is available through the file storage API. See Product Location Import Error Files for more information.

Note:

Some errors that can occur from the data in the import file are not written to the related import database table. In that case, the error is noted only in the error file or record, and not on the related report. Examples include an invalid number of columns in the import file, a numeric field that contains alphabetical data, or a date that is not formatted correctly.

Options at this screen

| Option | Procedure |

|---|---|

| Search for an import process |

Use the Job Number, Start Date, or Status fields to restrict the displayed imports to those that match your entries and click Search:

Note: You cannot select a status of Paused; however, a job stays in this status only briefly when it switches to another server to complete running. |

| Understand general errors |

If an error occurred for an import process itself, it is displayed in the Error field. |

Fields at this screen

| Field | Description |

|---|---|

| Summary fields: | |

| Organization |

The code identifying the organization associated with the system that generated the import process. The organization description is to the right of the organization code, separated by a hyphen. |

| System |

The code identifying the system that generated the import process, either because you ran it on demand from the Schedule Jobs screen, or it was scheduled at that screen. This is the system you selected at the Schedule Jobs screen. The system description is to the right of the system code, separated by a hyphen. |

| Job Number |

A unique ID number assigned by Order Orchestration to identify an import process. Optionally, enter a number and click Search to display this import process only. |

| Search fields: | |

| Start Date |

Optionally, enter a date to display imports that started on that date. |

| Status |

Optionally, select a status of:

|

| Results fields: | |

| Job Number |

A unique ID number assigned by Order Orchestration to identify an import process. Note: An import is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |

| Start Date |

The date and time when the import process started. |

| End Date |

The date and time when the import process ended. |

| Duration |

The amount of time, in minutes and seconds, that the import process ran. HH:MM:SS format, but displayed in MM:SS format if the import took less than an hour. |

| Submitted By |

Indicates how the import was submitted. This could be the ID of the user who submitted or scheduled the process, or the client ID used to authenticate the run job API request message. SYSTEM is displayed here if the job ran successfully through a schedule; however, if a scheduled job was rejected, the ID of the user who scheduled the job is displayed. |

| Status |

Indicates whether any product or product location errors occurred for the import process:

|

| Error |

Indicates the general error, if any, that prevented the product import from taking place. If this field indicates Error importing file. See error file for details, this could indicate that at least one of the import files included invalid data, such as:

In this case, the process moves the file to the error container in the FILE_STORAGE table. This field indicates Error importing from OCDS. See error log for details if errors occurred during OCDS or Merchandising Omni Services Imports. See that help topic for more information. Note: General errors are not included in the Product Import Errors Report or the Product Barcode Import Errors Report. In the case of the Location Import Errors Report, some general errors are included on the report with the description Location Import Failed - Other Error. |

| Type |

Indicates that the top row for the following columns lists the number of records Processed, while the bottom row for the following columns lists the number of records Errored. |

| Product Records |

Top row: The total number of product records included in the import process, including any records that were in error. Bottom row: The total number of product import records that were in error. If there were any errors, you can use the Product Import Errors Report to review them. |

| Inventory Records |

Top row: The total number of product location records included in the import process, including any records that were in error. Bottom row: The total number of product location import records that were in error. See Product Location Import Error Files for more information. |

| Location Records |

Top row: The total number of location records included in the import process, including any records that were in error. Bottom row: The total number of location import records that were in error. If there were any errors, you can use the Location Import Errors Report to review them. |

| UPC Records |

Top row: The total number of product UPC barcode records included in the import process, including any records that were in error. Bottom row: The total number of product UPC barcode import records that were in error. If there were any errors, you can use the Product Barcode Import Errors Report to review them. |

| Image Records |

Top row: The total number of product image records included in the import process, including any records that were in error. Product images are displayed in Store Connect. Bottom row: The total number of product image import records that were in error. A product import image record could be in error because the URL for the image was not formatted correctly. See Importing Items/Products, Inventory, Barcodes, Images, and Locations into the Database for an overview. |
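The display rule for the Duration field in the table above (HH:MM:SS, shortened to MM:SS when the process took less than an hour) can be sketched as follows. This is only an illustration of the rule as described; the function name and the zero-padding of each part are assumptions, not the product's actual code.

```python
def format_duration(total_seconds: int) -> str:
    """Format a run time the way the Duration field is described.

    Hypothetical helper: shows HH:MM:SS, or MM:SS when under one hour.
    """
    if total_seconds < 0:
        raise ValueError("total_seconds must not be negative")
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    if hours == 0:
        # Less than an hour: omit the hours part.
        return f"{minutes:02d}:{seconds:02d}"
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}"


short_run = format_duration(125)    # 2 minutes 5 seconds
long_run = format_duration(3725)    # 1 hour 2 minutes 5 seconds
print(short_run, long_run)
```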

Sales Order Data Extract Job History

Purpose: Use the Sales Order Data Extract Job History screen to review summary information on the sales order data extracts that have taken place for an organization.

Used for the Routing Engine module.

How to display this screen: Click the history icon for an organization at the View Active Schedules screen.

Note:

- Only users with View Active Schedules authority can display this screen. See Roles for more information.

- Available only if the Sales Order Data Extract is currently enabled, or if there are any data extract records that have not been purged.

- If the Sales Order Data Extract Job History screen was already open in another tab when you clicked the history icon, you advance to this screen with the order data extract history of the previously-selected organization displayed.

- History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.

Maximum number of orders: The extract fails if the total number of orders to extract exceeds 500,000. If this occurs, use the Extract Orders with Activity From and To settings at the Schedule Jobs screen to run the extract for smaller segments by specifying date ranges to include each time, rather than including all orders.
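One way to plan smaller segments is to split a long activity period into consecutive date windows and run the extract once per window. The sketch below only computes the date ranges. It does not call Order Orchestration, and the function name and the 30-day window size are assumptions chosen for illustration.

```python
from datetime import date, timedelta


def split_date_range(start: date, end: date, days_per_window: int):
    """Split the inclusive range [start, end] into consecutive windows.

    Hypothetical helper: each (from_date, to_date) pair could be used as
    one From/To date range for a separate extract run.
    """
    if days_per_window < 1:
        raise ValueError("days_per_window must be at least 1")
    windows = []
    current = start
    while current <= end:
        # The last window is cut short so it never runs past the end date.
        window_end = min(current + timedelta(days=days_per_window - 1), end)
        windows.append((current, window_end))
        current = window_end + timedelta(days=1)
    return windows


windows = split_date_range(date(2022, 1, 1), date(2022, 3, 31), 30)
for from_date, to_date in windows:
    print(from_date.isoformat(), "to", to_date.isoformat())
```

If a window still exceeds the limit, reduce the window size and try again.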

For more information: See the Sales Order Data Extract for information on generating the extract, and see Sales Order Data Extract Files for information on the data included in the extract files.

Options at this screen

| Option | Procedure |

|---|---|

| Search for an extract process |

Use the Job Number, Start Date, or Status fields to restrict the displayed extracts to those that match your entries and click Search:

|

| Understand general errors |

If an error occurred for an extract process itself, it is displayed in the Error field. This might occur if, for example, the number of orders to extract exceeded the limit of 500,000. |

Fields at this screen

| Field | Description |

|---|---|

| Summary fields: | |

| Organization |

The code identifying the organization that generated the extract process, and the description of the organization. |

| Search fields: | |

| Job Number |

A unique ID number assigned by Order Orchestration to identify an export job. Optionally, enter a number and click Search to display this export process only. |

| Start Date |

Optionally, enter a date to display extracts that started on that date. |

| Status |

Optionally, select a status of:

|

| Results fields: |

Note: History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen. |

| Job Number |

A unique number assigned by Order Orchestration to identify an export process. |

| Start Date |

The date and time when the export process started. |

| End Date |

The date and time when the export process ended. |

| Duration |

The amount of time it took for the export to run. HH:MM:SS format, but displayed in MM:SS format if the export took less than an hour. |

| Submitted By |

Indicates how the export was submitted. This could be the ID of the user who submitted or scheduled the process, or the client ID used to authenticate the run job API request message. SYSTEM is displayed here if the job ran successfully through a schedule; however, if a scheduled job was rejected, the ID of the user who scheduled the job is displayed. |

| Status |

Indicates whether any errors occurred for the export process:

|

| Error |

The error that occurred, if any. |

| Order Records |

The total number of order records included in the export process. This total can include both sales orders and purchase orders. |

View Job History

Purpose: Use the View Job History screen to review jobs that have run.

History icon always shown: The history icon to review job history is available for all jobs listed on this screen, regardless of whether the Schedule Enabled flag at the Schedule Jobs screen is selected for the job. However, history is available for review only if the job has run at least once, and it has any history records that have not been purged.

The screen displays up to 100 job history records in reverse chronological order (newest to oldest). If necessary, use the search fields at the top of the screen to restrict the displayed job history records.

How to display this screen: Select View Job History from the Systems Menu. The landing page does not have a shortcut to this screen.

Note:

Only users with View Job History authority can display this screen. See Roles for more information.

History screens for individual jobs: You can also use the history icon at the View Active Schedules screen to advance to a screen displaying all unpurged history records for the selected job. See that screen for more information.

History is retained for the number of days specified in the Job History retention setting at the Tenant-Admin screen.

Options at this screen

| Option | Procedure |

|---|---|

| Search for a job history record |

Optionally, select a Job Name, Organization, System, Start Date, or End Date, and click Search to display the matching job history records. Note: Searching by organization or system is supported only for jobs that are specific to an organization or system. See the Job Name field for a summary description of each job. |

| Refresh the displayed information |

Click Search. |

Fields at this screen

| Field | Description |

|---|---|

| Search fields: | |

| Job Name |

A job that has run at least once and still has history that has not been purged. Optionally, select a listed job and select Search to display the history records for that job. Jobs that can be displayed here are:

- Auto Cancel Unclaimed Pickup Orders: Cancels unclaimed pickup or ship-for-pickup orders based on the settings of the Auto Cancel Days of Unclaimed Pickup Orders and Auto Cancel Days of Unclaimed Ship For Pickup Orders at the Preferences screen. This job runs daily at a specified time. See Auto-Cancel Unclaimed Orders for a discussion.

- Completed Order Private Data Purge: Anonymizes customer data on sales orders that have closed (fulfilled, canceled, unfulfillable, completed) and purchase orders that have been closed (shipped, complete, or canceled) and that are older than a specified number of days. See Completed Order Private Data Purge for a discussion.

- Daily Clean Up: Clears outdated information on a daily basis. This job runs daily at a specified time. See the Daily Clean Up job for details.

- Email Notifications: Generates email notifications to store locations, vendors, customers, retailers, or systems operations staff based on the unprocessed records that are currently in the EMAIL_NOTIFICATION table. This job runs at the specified minute interval: for example, generate email notifications every 5 minutes. See the Email Notifications job for details.

- Fulfilled Quantity Export: Generates a pipe-delimited file of recent order fulfillments, so the inventory system of record can use this information to update its own inventory based on activity in Order Orchestration. See the Fulfilled Inventory Export job for details.

- Generate Pickup Ready Reminder Emails: Generates pickup-ready reminder emails to customers whose pickup orders have not been picked up within the number of Aged Hours defined for the job. See the Generate Pickup Ready Reminder Emails job for details.

- Identity Cloud Service Synchronization: Creates users in Order Orchestration based on the data that has been set up in IDCS or OCI IAM. See the Identity Cloud User Synchronization job for details.

- Incremental Inventory Import: Uses the contents of a pipe-delimited import file to update product location records for the system. See the Incremental Inventory Import job for details.

- Inventory Quantity Export: Creates a pipe-delimited export file providing the current totals for products or product locations based on updates to product location quantities, including applying probable quantity or probability rules. Also supports a web service to respond to requests for current inventory quantity changes based on probability rules. See the Inventory Quantity Export job for details.

- Product Import: Imports and updates information that includes products, system products, product locations, locations, and product barcodes. See the Product Import job for details.

- Sales Order Data Extract: Creates export files containing data related to orders with any activity within a specified date range. See the Sales Order Data Extract for details.

Jobs that are not flagged as enabled at the Schedule Jobs screen are not listed in the results here. |

| Organization |

See organization. Defaults from the Default Organization defined through the Users screen, but you can override it. Filtering displayed jobs based on organization takes place only for the jobs that are associated with a specific organization: these are the Probable Quantity Export, Fulfilled Quantity Export, Product Import, Incremental Inventory Import, and Sales Order Data Extract. |

| System |

See system. Optionally, select a system from the drop-down list to display jobs run for the selected system. If you first select an Organization, only systems associated with that organization are displayed. Filtering jobs based on system takes place only for the jobs that are associated with a specific system: these are the Probable Quantity Export, Fulfilled Quantity Export, Product Import, and Incremental Inventory Import. |

| Start Date |

The date and time when the job started. |

| End Date |

The date and time when the job ended. |

| Results fields: | |

| Job Name |

See the Job Name, above, for information. |

| Job Number |

A unique ID number assigned by Order Orchestration to identify a job. Note: A job is associated with a single job number, regardless of whether Order Orchestration breaks it out into multiple batches for processing. |

| Organization |

The organization code is displayed only for jobs related to a specific organization: these are the Probable Quantity Export, Fulfilled Quantity Export, Product Import, Incremental Inventory Import, and Sales Order Data Extract. |

| System |

The code identifying the system associated with the scheduled job. The system code is displayed only for those jobs related to a specific system: these are the Probable Quantity Export, Fulfilled Quantity Export, Product Import, and Incremental Inventory Import. |

| System Name |

The name describing the system. |

| Start Date |

The date and time when the job started. |

| End Date |

The date and time when the job ended. |

| Duration |

The number of minutes and seconds it took the job to run, in MM:SS format. Displayed only for the Incremental Inventory Import, Product Import, and Sales Order Data Extract. |

| Submitted By |

Indicates how the job was submitted. This could be the ID of the user who submitted or scheduled the process, or the client ID used to authenticate the run job API request message. SYSTEM is displayed as the user ID if the job ran successfully through a schedule; however, if a scheduled job was rejected, the ID of the user who scheduled the job is displayed. |

| Status |

Indicates the result of the most recent import process:

This field is blank if you have not yet run the import process for the system, or if the job is anything other than the Incremental Inventory Import, Product Import, Inventory Quantity Export, or Sales Order Data Extract. |

Schedule Jobs

Purpose: Use the Schedule Jobs screen to work with scheduled jobs.

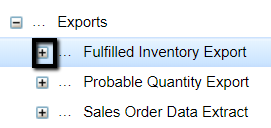

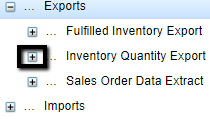

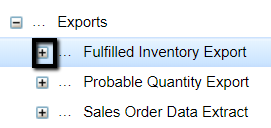

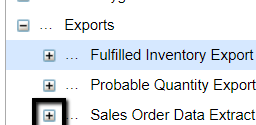

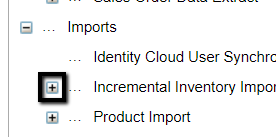

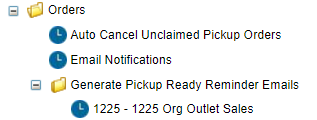

Displaying the jobs available to schedule: Use the folders on the left-hand pane to display the related jobs available for scheduling:

- Data Hygiene folder: Includes the Completed Order Private Data Purge job and the Daily Clean Up job.

- Exports folder: Includes the Fulfilled Inventory Export, Inventory Quantity Export, and Sales Order Data Extract jobs.

- Imports folder: Includes the Identity Cloud User Synchronization, Incremental Inventory Import, and Product Import jobs.

- Orders folder: Includes the Auto Cancel Unclaimed Pickup Orders job, the Email Notifications job, and the Generate Pickup Ready Reminder Emails job.

Highlight a job in the left-hand pane to display schedule information and options in the right-hand area.

See each job below for more information.

Resolving scheduling issues: The Reschedule All option at the View Active Schedules screen stops and restarts the schedules for all jobs in the case of an unexpected interruption. Also, you use this option to start running all scheduled jobs and programs when first configuring Order Orchestration, or after an upgrade is applied.

Note that the Reschedule All option does not restart jobs that are in Paused status. Jobs stay in Paused status only briefly before Order Orchestration restarts them automatically.

Note:

Do not attempt to schedule jobs before creating systems.

Dates and times: The dates and times are based on the retailer’s time, which may be different from your local time zone. As a result, you need to calculate the difference between your local time and the system time to have the scheduled import take place at the desired time.
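The time difference calculation can be sketched as follows. The example assumes you know the UTC offsets of your local time and of the retailer's time; the function name and the offsets used (-8 and -5 hours) are illustrative assumptions. Fixed offsets do not account for daylight saving changes, so recheck the result when either zone changes its clocks.

```python
from datetime import datetime, timedelta, timezone


def local_to_retailer_time(local_dt: datetime,
                           local_utc_offset_hours: float,
                           retailer_utc_offset_hours: float) -> datetime:
    """Convert a desired local run time to the retailer's time.

    Hypothetical helper: both offsets are supplied by the caller.
    """
    local_tz = timezone(timedelta(hours=local_utc_offset_hours))
    retailer_tz = timezone(timedelta(hours=retailer_utc_offset_hours))
    # Attach the local offset, then express the same instant in retailer time.
    return local_dt.replace(tzinfo=local_tz).astimezone(retailer_tz)


# You want the job to run at 09:00 your time (UTC-8); the retailer is at UTC-5.
desired_local = datetime(2022, 11, 15, 9, 0)
retailer_dt = local_to_retailer_time(desired_local, -8, -5)
print("Enter this time at the Schedule Jobs screen:", retailer_dt.strftime("%H:%M"))
```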

Job notifications: If the Event Notifications settings are configured at the Event Logging screen, a job notification message is generated each time one of the scheduled jobs runs. See Event Notifications settings and the Job Notification Messages appendix of the Web Services Guide on My Oracle Support (2953017.1) for more information.

Status email: If a job for a specific system is rejected because a conflicting job was already running, Order Orchestration generates a status email to the Administrative Email specified at the Event Logging screen, indicating:

- System Code: The system submitting the job.

- Blocking System Code: The system that submitted the job that blocked the job.

- Date/Time File Rejected

- Run By: The user ID of the person who submitted the job, or set to SYSTEM if the job was scheduled.

Which jobs conflict? You cannot run any of the following jobs at the same time:

Run Job API: You can also use the Run Job API to submit a job, as an alternative to submitting the job on demand or scheduling it at this screen. See the Web Services Guide on My Oracle Support (2953017.1) for background.

How to display this screen: Select Schedule Jobs from the Systems Menu. The landing page does not have a shortcut to this screen.

Note:

Only users with Schedule Jobs authority can display this screen. See Roles for more information.

Completed Order Private Data Purge

The Completed Order Private Data Purge job in the Data Hygiene folder anonymizes customer data on sales orders that have closed (fulfilled, canceled, unfulfillable, completed) and purchase orders that have been closed (shipped, complete, or canceled) and that are older than the number of days specified in the Days Old field.

If the same external order number is assigned to multiple sales or purchase orders, each purchase order is purged only if it is closed and is older than the retention days. For example, order number 12345 is assigned to three purchase orders: two created on February 1 and a third created on February 6. If the current date is February 15 and the retention days is set to 10, then the third purchase order cannot yet be purged.

The order’s age is calculated based on the CREATE_TIMESTAMP from the XOM_ORDER table or the CREATED_DATE from the PO_HEADER table. This is the date and time when Order Orchestration created the sales order or purchase order, which might be different from when the order was created in the originating system.
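The eligibility check described above can be sketched as follows, using the February example. This is only an illustration: the function name is hypothetical, the year is arbitrary, and treating "older than" as a strict comparison on the elapsed time is an assumption about how the job compares dates.

```python
from datetime import datetime, timedelta


def is_eligible_for_purge(created: datetime, now: datetime,
                          days_old: int, is_closed: bool) -> bool:
    """Return True if an order could be anonymized under the stated rule.

    Hypothetical helper: the order must be closed and its creation
    timestamp must be more than days_old days before now (assumed strict).
    """
    if not is_closed:
        return False
    return (now - created) > timedelta(days=days_old)


now = datetime(2022, 2, 15)  # current date in the example; year is arbitrary
purchase_orders = [
    datetime(2022, 2, 1),    # first purchase order for order number 12345
    datetime(2022, 2, 1),    # second purchase order
    datetime(2022, 2, 6),    # third purchase order, only 9 days old
]
results = [is_eligible_for_purge(created, now, days_old=10, is_closed=True)
           for created in purchase_orders]
print(results)
```

The first two purchase orders are 14 days old and qualify, while the third is 9 days old and does not.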

About anonymization: When the customer data is anonymized, all information is replaced with asterisks. Anonymized data cannot be recovered. Data that is anonymized includes sold-to and ship-to customer names, addresses, email addresses, and phone numbers for sales orders and purchase orders. Even if a field, such as one of the address lines, did not previously contain data, the purge populates the field with asterisks. See Anonymizing Data for a discussion.

A Transaction Note is written for each order line: Private Data Anonymized.

Scheduling the Completed Order Data Purge

- Select the Day of Week when the job should run.

- Enter the Time when the job should run in 24-hour format (HH:MM).

- Enter the Days Old an order or purchase order must be to be eligible for anonymization.

- Select Schedule Enabled.

- Select Save.

- Select Cancel to exit the screen.

Optionally, select Run Now to run the job immediately.

Orders across all organizations are anonymized.

Completed Order Data Purge Fields

- Schedule Enabled

- Schedule Interval: Set to Weekly. Display-only.

- Day of Week

- Time

- Days Old: The number of days old a completed order must be to be eligible for purge.

- Last Updated

- Last Run

- Next Run

History: Use the Completed Order Private Data Purge screen to review completed order data purge jobs that have run.

For more information: See the Web Services Guide on My Oracle Support (2953017.1) for information on web service requests that support inquiring on private data and requesting to anonymize it.

Daily Clean Up

The Daily Clean Up job in the Data Hygiene folder clears outdated information, including:

- Pack slip records generated through the Vendor Portal, after the number of days specified in the Pack Slip Files field under Retention Settings at the Tenant-Admin screen.

- Reports, after the number of days specified in the File Storage field under Retention Settings at the Tenant-Admin screen.

- File storage records, after the number of days specified in the File Storage field under Retention Settings at the Tenant-Admin screen.

- Pack slip records generated through Store Connect, after one day.

- Shipping label records generated for integrated shipping with ADSI, in either the Vendor Portal or Store Connect, after one day.

- Email notification records, after three days.

- Product import error files and part files, after the number of days specified in the Product Import Error Files field under Retention Settings at the Tenant-Admin screen, if Cloud Storage is used.

- Records in the RICS_LOG table of messages between Order Orchestration and Oracle Retail Integration Cloud Service (RICS), based on the number of days specified in the RICS Log History field under Retention Settings at the Tenant-Admin screen. See Order Fulfillment through RICS Integration for background. RICS log records whose Retry Status is Failed are not eligible to be purged.

- Job history records which are older than the Job History setting at the Tenant-Admin screen. See the View Job History screen to review job history.

- Audit records for audited tables that have exceeded the audit retention days specified in the CTL_APP_CONFIG table in the database. The retention days is set to 183 days (6 months) by default, and is not displayed on any screen. The audited tables include Preferences, Preference Overrides, Drop Ship Preferences, Job Schedule, System, and Web Service Users. Contact your Oracle support representative if the retention setting needs to be changed.

- Shopping logic trace records for closed, completed, canceled, and unfulfillable orders, when the records are older than the number of days specified in the Trace Log History field under Retention Settings at the Tenant-Admin screen, if shopping logic tracing is enabled. See Trace Shopping Log for background.

- Records in the XOM_ITEM_DUPLICATE table that are older than 180 days. This table contains a record for each order line that was not created in Order Orchestration because a duplicate was found. Duplicate records are retained for 180 days for troubleshooting by Oracle Support.

- Records in the XOM_ITEM_DUP_CHECK table that are older than 2 days. This table contains a record of each submitted order, which is used temporarily only for the duplicate checking process before order creation.

Scheduling the Daily Clean Up Job

- Enter the Time in 24-hour format (HH:MM) when the job should run.

- Optionally, select Schedule Enabled.

- Optionally, select Run Now to run the job immediately.

- Select Save.

- Select Cancel to exit the screen.

Daily Clean Up Fields

- Schedule Enabled

- Schedule Interval: Set to Daily. Display-only.

- Time

- Last Updated

- Last Run

- Next Run

Daily Cleanup History: Use the Daily Clean Up Job History screen to review daily cleanup jobs that have run.

For more information: See the Tenant-Admin screen for information on Retention Settings fields.

Fulfilled Inventory Export

Purpose: Use the Fulfilled Inventory Export to generate a pipe-delimited file of recent order fulfillments, so the inventory system of record can use this information to update its own inventory based on activity in Order Orchestration.

Export updates: The export program:

- identifies each order line within the system since the last time the export was run, based on the export update date and time in the xom_status_history table:

  - delivery and pickup orders: the order line assigned to the location for fulfillment has gone into fulfilled status

  - ship-for-pickup orders: the order line assigned to the location for sourcing (transferring or shipping the item to the pickup location) has gone into intransit status

- for each order line whose fulfilled or intransit quantity was included in the export, updates the export update date and time in the xom_status_history table

- for each product location included in the export:

  - if the Track Fulfilled Quantity setting is Reset During Inventory Export, sets the Fulfilled Quantity to 0

  - decreases the Available Quantity by the total quantity of fulfilled order lines included in the export, based on the quantity from the xom_status_history table

  - updates the Last Updated Date for the product location

- generates the export file, creating the export record in the FILE_STORAGE table. The CONTAINER setting for the record is OROB-EXPORT. You can use the File Storage API to download export file records from the FILE_STORAGE table. See File Storage API for Imports and Exports for details.

Fulfilled Inventory Export History: Use the Fulfilled Inventory Export History screen to review fulfilled inventory exports that have run.

For more information: See the Fulfilled Inventory Export File.

Fulfilled quantity used in availability calculation: Both the Reserved Quantity and the Fulfilled Quantity are subtracted from the product location’s Available Quantity when calculating the Available to Promise quantity. See Calculating the Available to Promise Quantity for an overview.
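The subtraction described above can be sketched as follows, together with the effect of an export when Track Fulfilled Quantity is set to Reset During Inventory Export. This is a simplified illustration: the function names and quantities are made up, the example assumes the exported quantity equals the current Fulfilled Quantity, and the actual Available to Promise calculation may involve other factors described in Calculating the Available to Promise Quantity.

```python
def available_to_promise(available: int, reserved: int, fulfilled: int) -> int:
    """Subtract reserved and fulfilled quantities from the available quantity."""
    return available - reserved - fulfilled


def apply_export_reset(available: int, fulfilled: int):
    """Simulate the export with Reset During Inventory Export (assumed behavior).

    The Available Quantity is decreased by the exported fulfilled quantity
    and the Fulfilled Quantity is reset to 0.
    """
    return available - fulfilled, 0


# Hypothetical product location quantities before the export runs.
available, reserved, fulfilled = 10, 2, 3
atp_before = available_to_promise(available, reserved, fulfilled)

available, fulfilled = apply_export_reset(available, fulfilled)
atp_after = available_to_promise(available, reserved, fulfilled)

print(atp_before, atp_after)
```

Under these assumptions the Available to Promise quantity is the same before and after the export, because the fulfilled quantity moves out of the Fulfilled Quantity and is deducted from the Available Quantity instead.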

Typically, you would set the Track Fulfilled Quantity field at the System screen to Reset During Inventory Export. See that field.

Fulfilled Inventory Export File

- File format: The file is pipe-delimited (|).

- File location: The FILE_STORAGE table.

- File naming: Named FULFILLED_QUANTITY_EXTRACT_SYSCD_220831_165819.csv, where SYSCD is the code for the system, and 220831_165819 is the date (August 31, 2022) and time when the file was created, in the retailer’s time.

File contents:

- Location code: The code identifying the location that shipped the delivery order, where the pickup order was picked up, or that shipped or transferred the ship-for-pickup order.

- Order type: DELIVERY, PICKUP, or SHIPFORPICKUP.

- Order number: The number or code identifying the order in the originating system.

- System product code: The number or code identifying the item in the fulfilling system.

- Quantity fulfilled: The quantity of the item shipped, picked up, or in transit.

- Unit price: The unit price of the item on the order.

- Date and time: The date and time when the item was shipped or picked up. YYYY-MM-DDTHH:MM:SS.XXX format, where XXX is milliseconds (for example, 2022-11-15T16:15:34.710).
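A consuming system might read the export along the following lines. This is a minimal sketch, not an Oracle-provided parser. It assumes the columns appear in the order listed above, that the file has no header row, and that the quantity is a whole number. The sample row and the key names are made up for illustration.

```python
import csv
import io
import re
from datetime import datetime
from decimal import Decimal

# Assumed column order, taken from the File contents list; key names are hypothetical.
FIELD_NAMES = ["location_code", "order_type", "order_number",
               "system_product_code", "quantity_fulfilled",
               "unit_price", "date_time"]

FILE_NAME_PATTERN = re.compile(
    r"FULFILLED_QUANTITY_EXTRACT_(?P<system>.+)_(?P<date>\d{6})_(?P<time>\d{6})\.csv")


def parse_file_name(name: str):
    """Return (system code, creation datetime) from an export file name."""
    match = FILE_NAME_PATTERN.fullmatch(name)
    if match is None:
        raise ValueError(f"Unexpected file name: {name}")
    created = datetime.strptime(match["date"] + match["time"], "%y%m%d%H%M%S")
    return match["system"], created


def parse_export(text: str):
    """Parse pipe-delimited export text into a list of dictionaries."""
    rows = []
    for values in csv.reader(io.StringIO(text), delimiter="|"):
        if not values:
            continue  # skip blank lines
        if len(values) != len(FIELD_NAMES):
            raise ValueError(f"Expected {len(FIELD_NAMES)} columns, got {len(values)}")
        row = dict(zip(FIELD_NAMES, values))
        row["quantity_fulfilled"] = int(row["quantity_fulfilled"])
        row["unit_price"] = Decimal(row["unit_price"])
        row["date_time"] = datetime.strptime(row["date_time"], "%Y-%m-%dT%H:%M:%S.%f")
        rows.append(row)
    return rows


system_code, created = parse_file_name(
    "FULFILLED_QUANTITY_EXTRACT_SYSCD_220831_165819.csv")

# Made-up sample row for illustration only.
sample = "STORE1|PICKUP|ORD-1001|SKU-42|2|19.99|2022-11-15T16:15:34.710\n"
rows = parse_export(sample)
print(system_code, created, rows[0]["order_type"], rows[0]["quantity_fulfilled"])
```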

Scheduling the Fulfilled Inventory Export

- Click the plus sign next to the Fulfilled Inventory Export job in the left-hand panel to display a list of existing organizations.

Note:

The list of organizations is available only if Use Routing Engine is selected at the Tenant-Admin screen.

- Click the organization whose fulfilled inventory data should be extracted. When you select an organization, the systems within the organization are displayed.

- When you select an organization, the Fulfilled Inventory Export Fields are displayed to the right.

- Select one or more Days of Week when the job should run.

- Use the Time field to enter each time when the job should run, in 24-hour format (HH:MM). If entering multiple times, separate each with a comma and no spaces.

- Optionally, select Run Now to run the job immediately.

- Optionally, select Schedule Enabled.

- Select Save.

- Select Cancel to exit the screen.
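The Time field format described above (24-hour HH:MM entries, separated by commas with no spaces) can be checked before entry with a small helper such as the following. The helper is a sketch, not part of the product, and it assumes that each entry uses a two-digit hour and a two-digit minute.

```python
import re

# HH from 00 to 23, MM from 00 to 59; two digits each is an assumption.
_TIME = re.compile(r"(?:[01]\d|2[0-3]):[0-5]\d")


def parse_time_field(value: str) -> list:
    """Split a Time field value into entries, rejecting malformed ones."""
    entries = value.split(",")
    for entry in entries:
        # fullmatch rejects surrounding spaces, so "06:00, 18:30" fails.
        if _TIME.fullmatch(entry) is None:
            raise ValueError(f"invalid time entry: {entry!r}")
    return entries


times = parse_time_field("06:00,18:30")
```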

Fulfilled Inventory Export Fields

- organization

- system

- Schedule Enabled

- Schedule Interval: Set to Day(s) of Week. Display-only.

- Time

- Run Now

Job Summary

Inventory Quantity Export

The different options for calculating the available quantity for inventory export include using probable quantity rules; using probability rules; and not using rules. The required settings for each are described below.

Important:

Configure the export for only one system in your organization to support the export for all eligible systems within the organization. The selected system does not need to be the organization default.

Difference between probable quantity rules and probability rules: Probable quantity rules are used only to calculate the probable quantity to pass to an integrated system, such as an ecommerce site, while probability rules apply dynamically to determine the available quantity when Order Orchestration receives a request, such as a Submit Order request or a Locate Items request.

Use probability rules for the inventory quantity update to provide a consistent calculation both interactively and through the batch updates described here.

- Typical Inventory Quantity Export Usage

- Scheduling the Inventory Export

- Inventory Quantity Rules Settings

- Summary of Inventory Quantity Export Fields

- Probability Rules Update and Incremental Quantity Web Service

- Inventory Quantity Export Using Available to Promise Quantity (No Rules)

Typical Inventory Quantity Export Usage

An example of how you might use the inventory quantity export would be an ecommerce system that requires an estimate of availability for display at the ecommerce site:

- System A is your ecommerce system. For this system:

- The Inventory Qty Export flag is not selected, because the system does not require its own product locations in the export file.

- At the Inventory Quantity Export settings at the Schedule Jobs screen, the Enabled flag is selected, a schedule is defined, and a Safe Stock Method is selected.

- Systems B and C are additional systems in your organization that can fulfill orders. For these systems, the Inventory Qty Export flag is selected, because updated inventory should be included in the inventory quantity export file.

- If the Safe Stock Method is set to Probable Quantity Rules or No Rules, the inventory quantity export runs when scheduled for system A, and includes all product locations in systems B and C that have been updated since the last inventory quantity export for system A.

- If the Safe Stock Method is set to Probability Rules:

- Whenever Order Orchestration receives the inventory count web service request, it responds with the requested number of updated product location records since the most recent request.

- The inventory quantity export runs when scheduled for system A, and includes all product locations in systems B and C that have an available quantity greater than zero.

- The calculation of the quantities in the export file, and the web service response, when using probability rules, is based on the selected Safe Stock Method.
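The selection behavior in this example can be summarized in a short sketch. It models only what the example states: systems with the Inventory Qty Export flag selected contribute product locations, and the Safe Stock Method decides whether the file holds records changed since the last export or all records with an available quantity greater than zero. The class, function, and field names are hypothetical, and the sketch does not reproduce the quantity calculation itself.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class ProductLocation:
    system: str
    product: str
    location: str
    available_qty: int
    last_updated: datetime


def select_for_export(rows, flagged_systems, safe_stock_method, last_export):
    """Return the product locations the scheduled export would include."""
    # Only systems with the Inventory Qty Export flag contribute records.
    eligible = [r for r in rows if r.system in flagged_systems]
    if safe_stock_method == "Probability Rules":
        # Full overlay of everything with stock available.
        return [r for r in eligible if r.available_qty > 0]
    if safe_stock_method in ("Probable Quantity Rules", "No Rules"):
        # Records updated since the last export for the exporting system.
        return [r for r in eligible if r.last_updated > last_export]
    raise ValueError(f"unknown Safe Stock Method: {safe_stock_method!r}")
```

In the example, flagged_systems would contain systems B and C but not system A.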

Note:

The Default Unfulfillable Location is not included in the inventory quantity export.

See below for more details.

Pausing inventory updates through RICS: If Available-to-Sell Individual Inventory Updates through Oracle Retail Integration Cloud Service (RICS) are enabled, these updates need to pause during the inventory export process in order to prevent database contention. To support this requirement, if the Online flag at the RICS Integration tab on the System screen is selected:

- When the inventory quantity export begins, it first sends a stop request to RICS.

- Then when the export completes, it sends a start request to RICS.

The message is sent to the URL specified for the Orders Service at the RICS Integration tab on the System screen.
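The stop-then-start sequence can be pictured as a wrapper around the export. The sketch below is illustrative only: the send callable and the "stop" and "start" strings stand in for the actual RICS requests, whose format is not described here. The text does not say whether a start request is sent when the export fails; the sketch assumes it is.

```python
from contextlib import contextmanager


@contextmanager
def rics_paused(send, online):
    """Pause RICS updates around an export when the Online flag is selected.

    `send` is a hypothetical callable that delivers a request to the
    Orders Service URL.
    """
    if online:
        send("stop")
    try:
        yield
    finally:
        # Assumption: updates resume even if the export raises.
        if online:
            send("start")


sent = []
with rics_paused(sent.append, online=True):
    pass  # the inventory quantity export would run here
```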

Note:

To support sending the stop and start requests, the User ID specified for the Orders Service at the Add or Edit window, available from the External Services screen, needs to have the Operator or Admin role in RIB.

Maximum number of records exported? When the export is generated through the getInventoryQuantity request message, the export file includes up to the number of records specified for the inventory.qty.export.max.threshold in the CTL_APP_CONFIG table. This threshold is set to 500 by default. See the Web Services Guide on My Oracle Support (2953017.1) for more information.
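The effect of the threshold can be sketched as a cap on each batch of queued records. The function and the in-memory queue below are hypothetical; the text states only that a request returns up to the threshold number of records, so removing returned records from the queue is an assumption.

```python
from collections import deque

DEFAULT_MAX_THRESHOLD = 500  # default for inventory.qty.export.max.threshold


def next_batch(queue, requested, max_threshold=DEFAULT_MAX_THRESHOLD):
    """Return at most `requested` records, never more than the threshold."""
    count = min(requested, max_threshold, len(queue))
    # Assumption: records handed back are removed from the queue.
    return [queue.popleft() for _ in range(count)]


pending = deque(range(1200))        # stand-in for queued product locations
first = next_batch(pending, 1000)   # capped by the threshold
```

Under these assumptions, a caller asking for 1000 records receives 500 and must issue further requests for the remainder.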

Export file placement: The export creates the export file in the FILE_STORAGE table. The CONTAINER setting for the record is OROB-EXPORT. You can use the File Storage API to download export file records from the FILE_STORAGE table.

Inventory Quantity Export History: Use the Inventory Quantity Export History screen to review inventory quantity exports that have run.

Scheduling the Inventory Export

- Click the plus sign next to the Inventory Export job in the left-hand panel to display a list of existing organizations.

Note:

The list of organizations is available only if Use Routing Engine is selected at the Tenant-Admin screen.

- Click the organization whose inventory quantity data should be exported. When you select an organization, the systems within the organization are displayed.

Note:

When the Safe Stock Method is set to Probability Rules, you cannot schedule the job to run more than once a day. With this setting, the system can instead receive incremental inventory updates through the Get Inventory Quantity web service, described in the Web Services Guide on My Oracle Support (2953017.1).

- Use the Days of Week to select each day when the export job should run.

- Use the Time field to enter each time when the job should run, in 24-hour format (HH:MM). If entering multiple times, separate each with a comma and no spaces.

Important:

To avoid a system slowdown or degraded performance, do not run the Inventory Quantity Extract more than once a day or during peak processing time.

- When you select a system in the organization, the Summary of Inventory Quantity Export Fields are displayed to the right. See Inventory Quantity Rules Settings, below, for details on the Safe Stock Method, File Output Type, and Incremental Updates fields and their configuration options.

- Optionally, select Schedule Enabled.

- Select Save.

- Select Cancel to exit the screen.

Inventory Quantity Rules Settings

Probability Rules Settings:

- Overview: Provides incremental updates to an external system through a web service, applying probability rules set up through the Probability Rules and Probability Location screens, as well as a pipe-delimited file containing a full overlay of all product locations where the available quantity is greater than zero.

- Configuration:

- Safe Stock Method: Set to Probability Rules.

- File Output Type: Set to Full Overlay, and cannot be changed.

- Incremental Updates: Set to Probability Rules to enable a background process to perform the probability calculation and queue updated product location records to return in the response to the inventory quantity web service; otherwise, set to No Update.

Additional configuration requirements:

- Select the Inventory Qty Export flag at the Inventory tab of the System screen for each system that should have product locations included in the export.

- Set the PQE Startup Threads at the Tenant-Admin screen to a number from 1 to 100 to enable the background process that performs the probable quantity calculation.

- If needed, create the web service user ID to authenticate the incremental update web service for the Admin web service; see Web Service User for background.

For more information: See Probability Rules Update and Incremental Quantity Web Service. Also, see the RESTful Get Inventory Quantity Updates chapter in the Web Services Guide on My Oracle Support (2953017.1) for information on the web service that returns incremental inventory quantity updates.

Probable Quantity Rules Settings:

- Overview: Provides a pipe-delimited file that includes either totals aggregated by product or all changed records for individual product locations after applying probable quantity rules, set up through the Probable Quantity Rules and Probable Quantity Location screens.

- Configuration:

- Safe Stock Method: Set to Probable Quantity Rules.

- File Output Type: Set to either Aggregate by Product or Changed Records. See Aggregate by Product for a discussion.

- Incremental Updates: Set to No Update, and cannot be changed.

For more information: See Probable Quantity Update and Export.

No Rules Settings:

- Overview: Provides a pipe-delimited file that includes either totals aggregated by product or all changed records for individual product locations after applying probable location rules.

- Configuration:

- Safe Stock Method: Set to No Rules.

- File Output Type: Set to either Aggregate by Product or Changed Records. See Aggregate by Product for a discussion.

- Incremental Updates: Set to No Update, and cannot be changed. Only the pipe-delimited file is available.

For more information: See Inventory Quantity Export Using Available to Promise Quantity (No Rules).
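The three sets of settings above amount to a small table of allowed combinations. The following sketch encodes that table and reports any combination the screen would not permit. The setting values are taken from the descriptions above; the function and dictionary names are hypothetical.

```python
# Allowed values per Safe Stock Method, as described in the settings above.
ALLOWED = {
    "Probability Rules": {
        "file_output": {"Full Overlay"},
        "incremental": {"Probability Rules", "No Update"},
    },
    "Probable Quantity Rules": {
        "file_output": {"Aggregate by Product", "Changed Records"},
        "incremental": {"No Update"},
    },
    "No Rules": {
        "file_output": {"Aggregate by Product", "Changed Records"},
        "incremental": {"No Update"},
    },
}


def validate_settings(safe_stock_method, file_output_type, incremental_updates):
    """Return a list of problems; an empty list means the combination is allowed."""
    rules = ALLOWED.get(safe_stock_method)
    if rules is None:
        return [f"unknown Safe Stock Method: {safe_stock_method!r}"]
    problems = []
    if file_output_type not in rules["file_output"]:
        problems.append(
            f"File Output Type {file_output_type!r} is not available "
            f"with {safe_stock_method}"
        )
    if incremental_updates not in rules["incremental"]:
        problems.append(
            f"Incremental Updates {incremental_updates!r} is not available "
            f"with {safe_stock_method}"
        )
    return problems


problems = validate_settings("No Rules", "Changed Records", "No Update")
```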

Summary of Inventory Quantity Export Fields

- Schedule Enabled

- Schedule Interval: Set to Day(s) of Week. Display-only.

- Time

- Safe Stock Method

- File Output Type

- Incremental Updates

For more information: See the Tenant-Admin screen for information on Retention Settings fields.

Probability Rules Update and Incremental Quantity Web Service

Required configuration: See Inventory Quantity Rules Settings for details on configuring the inventory quantity export for probability rules and on enabling incremental updates through the Get Inventory Quantity Updates web service.

Difference between probable quantity rules and probability rules: Probable quantity rules are used to calculate the probable quantity to pass to an integrated system, such as an ecommerce site, while probability rules apply dynamically to determine the available quantity when Order Orchestration receives a request, such as a Submit Order request or a Locate Items request.

Tracking Probability Rules for Incremental Updates on Inventory Availability

The probability rule export uses a background process that evaluates changes that might affect the quantity that is expected to be available in product locations. This information is queued in the database so that Order Orchestration can send current information, including the new “probable quantity” based on probability rules, for updated product locations, when requested by an integrating system. This job runs if:

- Both the Safe Stock Method and the Incremental Updates are set to Probability Rules, and

- The PQE Startup Threads at the Tenant-Admin screen is set to any number from 1 to 100.
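The two conditions can be expressed as a single check. This is a sketch with hypothetical names, not the product's own logic.

```python
def incremental_job_runs(safe_stock_method, incremental_updates, pqe_startup_threads):
    """True when both settings are Probability Rules and threads are 1 to 100."""
    return (
        safe_stock_method == "Probability Rules"
        and incremental_updates == "Probability Rules"
        and 1 <= pqe_startup_threads <= 100
    )


runs = incremental_job_runs("Probability Rules", "Probability Rules", 4)
```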

Note that the field returned in the web service response is labeled as the probable quantity, but it is a projected inventory count based on probability rules, not on probable quantity rules.

Which systems? The background process evaluates product locations for all systems within the organization that have the Inventory Qty Export flag at the Inventory tab of the System screen selected.

Which updates trigger probability rule calculation? The background process monitors the following data that could be factors in applying probability rules, and calculates the updated quantity to return in the inventory quantity web service.